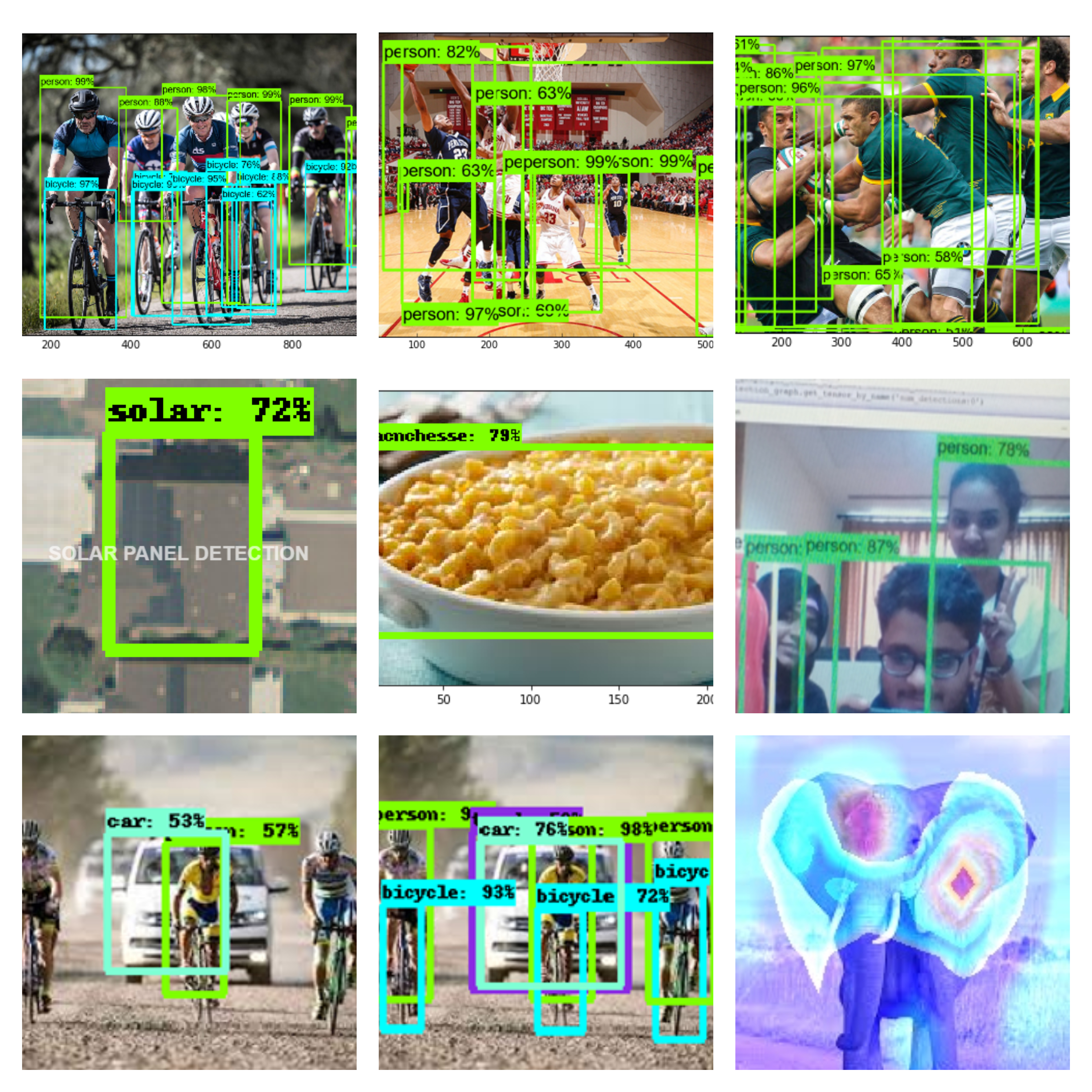

Object detection is the ability of a machine to accurately recognize and localize multiple objects in images and videos. It involves drawing a bounding box around each detected object together with a probability score measuring the confidence of the detection. Object detection is one of many interesting tasks in computer vision. A large community of researchers has worked hard to develop reliable, efficient, and accurate object detection models, and the advance of deep learning, driven by very high computational power and massive amounts of data, has replaced the traditional approaches to computer vision tasks with deep learning models. In this project, we study in detail popular deep learning models for real-time object detection, and we visualize and investigate the speed and accuracy of different object detection models on both videos and images. We also perform image classification using transfer learning and visualize various intermediate feature maps of the model.

After the rebirth of deep learning, and thanks to the availability of immense amounts of data and very high computational power, most areas of computer science have seen a paradigm shift that made their traditional approaches obsolete [3]. Computer vision is one of the fields most affected by the rise of deep learning. Researchers realized the importance of data for addressing computer vision tasks, and as a result the ImageNet database was introduced in 2009 [4]; it consists of about 15 million images belonging to about 22,000 classes. In 2012, the paper “ImageNet Classification with Deep Convolutional Neural Networks” by Alex Krizhevsky, Geoffrey Hinton, et al. produced astonishing results and won the annual ImageNet challenge (an international benchmark for measuring the performance of computer vision models) by reducing the error rate from about 26% to about 15% [5]. Convolutional neural network (CNN or ConvNet) based models have played a significant role in computer vision tasks such as image classification, object detection, and image segmentation. ConvNet models were introduced by Yann LeCun and others around 1990 and try to mimic the working of the human brain: each neuron-like node receives information from the previous set of nodes, performs some computation (a non-linear operation), and forwards the generated output to the successive nodes.
Each layer in a ConvNet consists of a definite number of neurons dedicated to a distinct job: the convolution layer multiplies a filter element-wise with the corresponding patch of the input image and sums the products, the activation function introduces non-linearity into the model, max-pooling reduces dimensionality, and the fully connected layers perform classification. In this way the model learns increasingly abstract representations of the input image, which help it perform classification, object detection, and localization [6]. Deep learning models have now been applied to nearly every computer vision problem.

In the literature, Ross Girshick of Facebook AI Research (FAIR) has made significant contributions to deep learning based object detection models. In 2014, the Region-based Convolutional Neural Network (R-ConvNet) model was proposed. It is a dual-phase object detection model and was followed by Fast R-ConvNet and Faster R-ConvNet. These models recorded good accuracy but took a long time to train and were slow at detection, which made them difficult to apply in real time. In 2016, Redmon introduced the You Only Look Once (YOLO) model, a single-phase object detection model that puts a bounding box around each object together with a confidence score. It is one of the top-performing models for real-time object detection, with only 25 milliseconds of latency. We performed object detection using various dual-phase and single-phase models on both images and live videos and analysed their speed and accuracy.
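The layer operations described in this section (convolution, activation, and max-pooling) can be illustrated with a minimal pure-Python sketch. The toy image, the 2x2 edge filter, and the function names are assumptions chosen for illustration; as in most deep learning frameworks, the "convolution" here does not flip the filter.

```python
def conv2d_valid(image, kernel):
    """Slide the kernel over the image (no padding, stride 1),
    multiply element-wise and sum the products at each position."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map):
    """Activation function: keep positive responses, zero out the rest."""
    return [[max(0, v) for v in row] for row in feature_map]


def max_pool_2x2(feature_map):
    """Non-overlapping 2x2 max pooling; an odd trailing row/column is dropped."""
    h = len(feature_map) // 2 * 2
    w = len(feature_map[0]) // 2 * 2
    return [
        [max(feature_map[r][c], feature_map[r][c + 1],
             feature_map[r + 1][c], feature_map[r + 1][c + 1])
         for c in range(0, w, 2)]
        for r in range(0, h, 2)
    ]


# Toy 5x5 "image" with a vertical edge and a hypothetical 2x2 edge filter.
image = [
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
]
kernel = [[-1, 1],
          [-1, 1]]

features = max_pool_2x2(relu(conv2d_valid(image, kernel)))
```

The filter responds only where the intensity jumps from 0 to 1, and pooling halves the spatial resolution while keeping the strongest response in each 2x2 block.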

In this section, we discuss traditional approaches, that is, the techniques used to address computer vision tasks before the arrival of deep learning models. Several feature extraction techniques, such as the Viola-Jones algorithm for face detection, Histogram of Oriented Gradients, Scale-Invariant Feature Transform, and Speeded-Up Robust Features, have been proposed, but the upswing of deep learning models has largely replaced these traditional methods of performing computer vision tasks.

Viola and Jones proposed what is probably the first real-time object detection model in 2001. This model was most widely used for face detection [7]. It uses Haar-like features for detection and requires a proper frontal view of the face for good performance (the model was unable to detect tilted objects). The Viola-Jones algorithm works as follows: 1) An appropriate window size and window step are chosen. 2) The window is slid across the image in the horizontal and vertical directions while N filters for face detection are applied at each position. If the window contains a face, one of the filters gives a high value. If no face is detected, the window size and window step are increased and step 2 is repeated. The model took little time to train and showed very good detection accuracy. The advantages of the Viola-Jones model are 1) high detection accuracy, 2) robustness, and 3) real-time operation.
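The two-step scanning procedure above can be sketched as follows. This is only an illustration of the sliding-window loop: `score_fn` is a hypothetical stand-in for the bank of face filters, and the default window size, step, growth factor, and threshold are assumed values, not those of the original detector.

```python
def sliding_windows(image_w, image_h, window, step):
    """Yield (x, y, window) for every top-left position where the window fits."""
    for y in range(0, image_h - window + 1, step):
        for x in range(0, image_w - window + 1, step):
            yield x, y, window


def detect(image_w, image_h, score_fn, window=24, step=8,
           growth=1.5, threshold=0.5):
    """Scan the image at one window size; if no filter fires,
    enlarge the window and the step and scan again."""
    while window <= min(image_w, image_h):
        hits = [(x, y, w)
                for x, y, w in sliding_windows(image_w, image_h, window, step)
                if score_fn(x, y, w) >= threshold]
        if hits:
            return hits
        window = int(window * growth)
        step = int(step * growth)
    return []


# Hypothetical scorer: pretends a face fills the 36x36 window at the origin.
def toy_score(x, y, w):
    return 1.0 if (x, y, w) == (0, 0, 36) else 0.0


found = detect(48, 48, toy_score)
```

With the toy scorer, the first pass at window size 24 finds nothing, so the window grows to 36 and the second pass reports the hit.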

Histogram of Oriented Gradients (HOG) is a feature extraction technique used for object detection [8]. HOG features are hand-crafted features introduced by Dalal and Triggs in 2005, and the approach was very popular during 2008-2011. HOG works as follows. It converts the pixel values of the image into gradients (derivatives, or rates of change), which give the change in pixel intensity at each location. A histogram represents the distribution of numerical data. In HOG, an image patch is considered, the oriented gradients are calculated for it, and then the histogram of orientations is built. This gives a clear picture of how strongly each gradient orientation is present in the patch. HOG is widely used in pedestrian detection systems.
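As a rough illustration of the description above, the following sketch computes a magnitude-weighted orientation histogram for a single patch. It is a simplification under stated assumptions: central differences for the gradients, 9 unsigned orientation bins over 0 to 180 degrees, and no cell/block normalization or bin interpolation, all of which a full HOG descriptor would handle more carefully.

```python
import math


def orientation_histogram(patch, bins=9):
    """Magnitude-weighted histogram of unsigned gradient orientations
    (0 to 180 degrees) over the interior pixels of a patch."""
    hist = [0.0] * bins
    bin_width = 180.0 / bins
    for r in range(1, len(patch) - 1):
        for c in range(1, len(patch[0]) - 1):
            gx = patch[r][c + 1] - patch[r][c - 1]  # horizontal central difference
            gy = patch[r + 1][c] - patch[r - 1][c]  # vertical central difference
            magnitude = math.hypot(gx, gy)
            angle = math.degrees(math.atan2(gy, gx)) % 180.0
            hist[int(angle // bin_width) % bins] += magnitude
    return hist


# Toy 4x4 patch containing a vertical edge (intensity changes left to right).
patch = [
    [0, 0, 10, 10],
    [0, 0, 10, 10],
    [0, 0, 10, 10],
    [0, 0, 10, 10],
]
hist = orientation_histogram(patch)
```

For this patch all gradient energy falls into the first bin, because the intensity changes only in the horizontal direction.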

Scale-Invariant Feature Transform (SIFT) was introduced by David Lowe in 1999. This technique detects local features present in the image [9]. SIFT collects interesting features that describe the query object. The information collected during training is later used to detect objects in new images, but for reliable detection the gathered features must remain detectable despite changes in noise, illumination, and scale. SIFT is one of the best methods for collecting high-level features, but it is very slow, so it is difficult to apply to real-time recognition. SIFT finds application in 3D modelling, gesture recognition, video tracking, etc.

H. Bay, T. Tuytelaars, and L. Van Gool introduced Speeded-Up Robust Features (SURF) in 2006. SURF is greatly influenced by the Scale-Invariant Feature Transform (SIFT) [10]. The SURF technique extracts the most important features, which help to perform object recognition. SURF uses an integer approximation of the determinant-of-Hessian blob detector and runs faster than SIFT. SURF is widely used in computer vision tasks such as object detection, 3D image reconstruction, and classification.

Haar-like features were proposed by Viola and Jones with the aim of reducing computation cost. Working directly with raw pixel intensities took more time to execute, so Haar-like features were introduced to make the whole process computationally inexpensive [11]. The Haar-like feature mechanism works as follows: it considers adjacent rectangular regions (up to four) at a particular location (or image patch), adds up the pixel values in each region, and then calculates the difference between these sums. The advantages of using Haar-like features are 1) speed and 2) the ability to compute them for objects of variable size.
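A minimal sketch of the sum-and-difference computation described above, for the simplest two-rectangle case. The integral image (summed-area table) used here is the standard device from the Viola-Jones detector for making rectangle sums cheap; it is not described in the text above and is included as an assumption, as are the function names.

```python
def integral_image(image):
    """ii[r][c] holds the sum of all pixels above and to the left of (r, c);
    the extra zero row and column simplify the lookups."""
    h, w = len(image), len(image[0])
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for r in range(h):
        row_sum = 0
        for c in range(w):
            row_sum += image[r][c]
            ii[r + 1][c + 1] = ii[r][c + 1] + row_sum
    return ii


def rect_sum(ii, top, left, height, width):
    """Sum of the pixels in a rectangle using four integral-image lookups."""
    bottom, right = top + height, left + width
    return ii[bottom][right] - ii[top][right] - ii[bottom][left] + ii[top][left]


def two_rect_feature(ii, top, left, height, width):
    """Sum of the left rectangle minus the sum of the adjacent right rectangle,
    each of size height x width."""
    return (rect_sum(ii, top, left, height, width)
            - rect_sum(ii, top, left + width, height, width))


# Toy 4x4 patch: dark left half, bright right half.
patch = [
    [1, 1, 3, 3],
    [1, 1, 3, 3],
    [1, 1, 3, 3],
    [1, 1, 3, 3],
]
ii = integral_image(patch)
feature = two_rect_feature(ii, 0, 0, 4, 2)
```

Once the integral image is built, every rectangle sum costs four lookups regardless of the rectangle size, which is why the same feature can be evaluated cheaply at variable scales.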

Object detection is a building block of computer vision. It involves assigning labels to the multiple objects present in an image (classification) and also locating those objects (localization). Object detection models represent images hierarchically, with an increasing degree of abstraction, so that all the objects present in the images are recognized accurately [12]. There are two types of object detection models: 1) one-phase object detection models and 2) dual-phase object detection models.

Single-stage object detection models consist of only one deep neural network, which performs the whole object detection task. These models do not include separate stages such as object proposal generation (which gives the regions of the query image that may contain objects) and resampling (which refines the bounding boxes and identifies false detections). Instead, all these stages are packed into a single neural network, which reduces the computation cost and increases speed. One-phase object detection models aim at speed but are less accurate than dual-phase models. They train quickly and are efficient to deploy. Since the model contains only one network, end-to-end optimization can be achieved. Examples of one-phase object detection models are You Only Look Once (YOLO) and Single Shot Detector (SSD).

In the literature, the Single Shot Detector (SSD) has been proposed [13]; it consists of a single deep neural network capable of detecting the objects present in an image. The model combines the predictions produced by several feature maps of different resolutions to deal with objects of different sizes. SSD does not include separate region proposal and resampling stages; instead, it packs all these stages into a single pipeline. This decreased the computation time and increased the speed to a great extent. Since SSD is a single-pipeline model, training is easy and the model can be integrated directly into other systems that require an object detection module. SSD uses convolutional filters to predict the object category and bounding boxes. SSD was very fast but less accurate than two-stage object detection models. The central idea of SSD is to predict category scores and box offsets for a fixed set of default bounding boxes with the help of small ConvNet filters applied to the feature maps. Experiments show that SSD produced good results on datasets such as MS COCO, PASCAL VOC, and ILSVRC.
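To make the central idea concrete, the sketch below counts how many values SSD-style prediction filters produce: for every cell of a feature map and every default box, one score per category plus four box offsets. The feature-map sizes, the number of default boxes per cell, and the number of classes are illustrative assumptions, not the configuration of any particular SSD model.

```python
def ssd_outputs_per_feature_map(rows, cols, boxes_per_cell, num_classes):
    """Number of predicted values for one feature map:
    (class scores + 4 box offsets) per default box, per cell."""
    return (num_classes + 4) * boxes_per_cell * rows * cols


# Illustrative values only (assumed): two feature maps of different
# resolution, 4 default boxes per cell, 21 categories.
feature_maps = [(38, 38), (19, 19)]
total_outputs = sum(
    ssd_outputs_per_feature_map(rows, cols, boxes_per_cell=4, num_classes=21)
    for rows, cols in feature_maps
)
```

The higher-resolution map contributes most of the predictions, which matches the intuition that fine feature maps cover small objects and coarse ones cover large objects.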

To speed up single-stage object detection, the You Only Look Once (YOLO) model has been introduced [14]. It is a single neural network that predicts a bounding box and a confidence score for every object present in the query image. Since the model consists of a single feedforward network, end-to-end optimization can be achieved. YOLO takes an entire image as input during training and directly optimizes detection performance. Fig. 2.1 shows the workflow of YOLO. The YOLO object detection pipeline includes three stages: 1) the model resizes the image to 448×448 pixels, 2) the image is passed through a single ConvNet, and 3) the resulting detections are thresholded by the model's confidence. YOLO could not reach the accuracy of dual-phase object detection models, but it has several advantages over traditional methods of object detection. It processes frames at a higher rate than other object detection models: base YOLO processes 45 frames/second, whereas Fast YOLO processes 155 frames/second. YOLO is one of the most preferred models for performing object detection on a live video feed, with less than 25 milliseconds of latency.
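The link between the frame rate and the latency figure quoted above can be checked with a short calculation. This sketch assumes frames are processed one at a time with no batching, so the per-frame processing time is a reasonable proxy for latency; the function name is illustrative.

```python
def frame_time_ms(frames_per_second):
    """Average processing time per frame, in milliseconds."""
    return 1000.0 / frames_per_second


# Base YOLO at 45 frames/second, as quoted in the text.
base_yolo_ms = frame_time_ms(45)
print(f"Base YOLO: {base_yolo_ms:.1f} ms per frame")
```

At 45 frames/second each frame takes roughly 22 ms, which is consistent with the stated latency of less than 25 milliseconds.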

Dual-phase object detection models involve object proposal generation followed by decision refinement. ConvNet-based approaches built on object proposals have been successful in object detection. Top object detection models such as R-ConvNet used Selective Search (SS) to generate the regions of the image most likely to contain objects, and a ConvNet to extract features that are then fed into a classifier. The stage after classification refines the bounding boxes, removes spurious detections, and rescores the boxes based on the other objects detected in the image. Since these models involve two stages, they are difficult to train and optimize. However, dual-phase object detection models produce results with very high accuracy.

A region-based convolutional neural network model (R-ConvNet) has been proposed in the literature [15] for object detection. R-ConvNet first identifies the regions of the image that may contain objects and then runs a classifier on them to identify the distinct objects. Its key features are 1) a high-capacity ConvNet for identifying different objects and 2) supervised pre-training followed by fine-tuning when labeled training data is scarce, which gives more accurate results. Fig. 2.2 shows the working of R-ConvNet. The model 1) accepts an input image, 2) uses Selective Search to extract about 2000 bottom-up region proposals, 3) warps each proposal to 227×227 pixels and computes its features with a large ConvNet (a local region-based approach), and 4) classifies each region with a linear Support Vector Machine (L-SVM). The speed of R-ConvNet was very poor because the ConvNet had to be run on each of the 2000 region proposals generated by Selective Search. Some of the disadvantages of the R-ConvNet model are 1) multistage training, 2) time-consuming training, and 3) very slow object detection.

In the literature, the Fast region-based convolutional neural network model (Fast R-ConvNet) has been introduced [16], which is significantly more powerful and efficient than R-ConvNet. Fast R-ConvNet takes much less time for computation and increases object detection accuracy for the following reasons: 1) Features are extracted from the whole image before the object proposals are processed, so the CNN needs to be run only once over the entire image instead of 2000 times, once per proposed region (since features are extracted from the full image, this is also called a global region-based method). 2) The SVM is replaced by a softmax layer. For each object proposal, a Region of Interest (RoI) pooling layer extracts features from the shared feature map, and the features obtained are passed to a sequence of fully connected layers. This approach made the model fast, and the fully connected layers together with RoI pooling made the model end-to-end trainable. Fig. 2.3 shows the workflow of Fast R-ConvNet. The advantages of the proposed model are 1) high object detection accuracy, 2) training updates all the network layers, and 3) no storage is required for feature caching. Fast R-ConvNet produced astonishing results on PASCAL VOC 2007, 2010, and 2012.
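The RoI feature extraction step can be sketched as max-pooling each region of the shared feature map into a fixed-size grid, so that regions of different sizes all yield inputs of the same shape for the fully connected layers. This is a simplified single-channel illustration; the 2x2 output size, the integer bin boundaries, and the function name are assumptions made for the example.

```python
def roi_max_pool(feature_map, roi, out_h=2, out_w=2):
    """Max-pool the region roi = (top, left, bottom, right), with exclusive
    bottom/right, into a fixed out_h x out_w grid."""
    top, left, bottom, right = roi
    h, w = bottom - top, right - left
    pooled = []
    for i in range(out_h):
        r0 = top + (i * h) // out_h
        r1 = max(top + ((i + 1) * h) // out_h, r0 + 1)  # at least one row per bin
        row = []
        for j in range(out_w):
            c0 = left + (j * w) // out_w
            c1 = max(left + ((j + 1) * w) // out_w, c0 + 1)  # at least one column
            row.append(max(feature_map[r][c]
                           for r in range(r0, r1) for c in range(c0, c1)))
        pooled.append(row)
    return pooled


# Toy 4x6 feature map computed once for the whole image.
feature_map = [
    [1, 2, 3, 4, 5, 6],
    [7, 8, 9, 10, 11, 12],
    [13, 14, 15, 16, 17, 18],
    [19, 20, 21, 22, 23, 24],
]

# Two proposals of different sizes both produce a 2x2 output.
pooled_a = roi_max_pool(feature_map, (0, 0, 4, 4))
pooled_b = roi_max_pool(feature_map, (1, 2, 4, 6))
```

Because the feature map is computed once and only this cheap pooling is repeated per proposal, the cost per region is far lower than running a full ConvNet on every region.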

To accelerate object detection further, the Faster region-based convolutional neural network model (Faster R-ConvNet) has been proposed [17]. Faster R-ConvNet aimed at replacing Selective Search, which is used for object proposal generation and whose poor speed is one of the major shortcomings of Fast R-ConvNet, and also at making the model end-to-end trainable. The essence of Faster R-ConvNet is the relationship between the region proposals and the image features extracted by the forward pass of the ConvNet. The researchers reused the same ConvNet features to identify the regions of the image most likely to contain objects, rather than using the Selective Search algorithm, so overall only one CNN needs to be trained. Fig. 2.4 shows the working of Faster R-ConvNet. For this purpose, the Region Proposal Network (RPN) was proposed. It is a fully convolutional network that runs on top of the extracted ConvNet image features and produces bounding boxes around candidate objects along with a score indicating how likely each box is to contain an object. Faster R-ConvNet reduced the computation cost and gave good results on the PASCAL VOC dataset.

Mask R-ConvNet, or the Mask region-based convolutional neural network model, has been introduced [18]; it is designed to perform segmentation at the pixel level. Mask R-ConvNet is an improved version of Faster R-ConvNet with an additional branch, called the mask branch, which predicts whether or not a pixel is part of an object. Fig. 2.5 shows the workflow of Mask R-ConvNet. The mask branch is a fully convolutional network built upon the feature maps generated by the ConvNet. Mask R-ConvNet works as follows: it takes the ConvNet-based feature map as input and generates a matrix with 1 wherever a pixel belongs to the object and 0 elsewhere. Mask R-ConvNet produced very good results on the Microsoft COCO dataset. In Fig. 2.6 we list all the traditional and deep learning approaches for dealing with computer vision tasks.
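The binary mask matrix described for Mask R-ConvNet can be illustrated by thresholding per-pixel object probabilities. This sketch assumes the mask branch outputs a probability per pixel and that 0.5 is used as the cut-off; both the threshold and the toy values are assumptions for illustration.

```python
def binarize_mask(pixel_probs, threshold=0.5):
    """Return a matrix with 1 where a pixel is predicted to belong to the
    object and 0 elsewhere."""
    return [[1 if p >= threshold else 0 for p in row] for row in pixel_probs]


# Hypothetical per-pixel probabilities for one detected object.
pixel_probs = [
    [0.1, 0.2, 0.1],
    [0.3, 0.9, 0.8],
    [0.2, 0.7, 0.6],
]
mask = binarize_mask(pixel_probs)
object_area = sum(sum(row) for row in mask)  # number of pixels in the object
```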