Everyone's been there. Builds start off fast and get slower over time, until you're staring at a 30-minute job and wondering what went wrong. That's no different with GitLab, where hosted and Kubernetes-based runners are the default option.

We recently took a call with a team at an online trading platform. Their average build time was about 10 minutes, and they wanted to claw back at least 2 minutes. Whilst we didn't have access to their proprietary code, we did find and build a real-world e-commerce project called nopCommerce.

On GitLab.com's hosted runners, the build took 9m50s, which made it a reasonable proxy for the kind of build they were dealing with. We managed to get that build running in 3m59s, saving roughly 6 minutes.

Across a team with 250 developers, that's roughly 67 eight-hour working days saved every month.

This matters even more now that AI agents can generate code faster than humans can read or review it.

Almost 10 min build time with GitLab's medium runner

Our fastest run with actuated on bare-metal, just under 4 minutes

Our fastest run for the unmodified code was 4m42s just by switching to actuated and a bare-metal server from Hetzner. We managed to drop a further 43 seconds by applying an optimisation that is not available for Kubernetes runners, and out of reach for most teams, with a final build time of 3m59s.

| GitLab.com | Actuated bare-metal | Actuated bare-metal optimized |

|---|---|---|

| 9m50s | 4m42s | 3m59s |

The source code for the project is available on our public GitLab group: https://gitlab.com/actuated/nopcommerce-ci-demo/.

Laying the foundations

When teams deploy to Kubernetes or ECS in production, they tend to build and publish container images using Docker or BuildKit. The nopCommerce sample app already had a Dockerfile, which made for a good start.

We put together a basic GitLab CI pipeline with three stages, with a waterfall approach:

- Build - build within a .NET Core container image, to catch high-level errors

- Test - test within a .NET Core container image

- Docker - providing the build and test jobs completed, an image is built with Docker

We left off the publish step because it adds a variable amount of time depending on the networking. We wanted to focus on the straight-line speed with as few variables as possible.

# nopCommerce CI/CD Pipeline

# Expected total time: ~8-12 minutes

#

# Breakdown:

# build: ~4-6 min (restore + full solution with 28 plugins)

# test: ~2-3 min (NUnit test suite with SQLite in-memory)

# docker: ~2-3 min (multi-stage Docker build)

stages:

- build

- test

- docker

variables:

DOTNET_CLI_TELEMETRY_OPTOUT: "true"

DOTNET_NOLOGO: "true"

SOLUTION_DIR: "src"

CONFIGURATION: "Release"

# ---------------------------------------------------------------------------

# Stage 1: Restore + Build the entire solution (Release configuration)

# Restore and build are combined because NuGet packages are stored in the

# global packages folder (~/.nuget/packages) which cannot be shared between

# jobs via artifacts.

# ---------------------------------------------------------------------------

build:

stage: build

image: mcr.microsoft.com/dotnet/sdk:9.0-alpine

tags:

- saas-linux-medium-amd64

script:

- echo "Restoring NuGet packages and building nopCommerce solution (${CONFIGURATION})..."

- dotnet build -c ${CONFIGURATION} ${SOLUTION_DIR}

artifacts:

paths:

- ${SOLUTION_DIR}/**/bin/

- ${SOLUTION_DIR}/**/obj/

expire_in: 1 hour

# ---------------------------------------------------------------------------

# Stage 2: Run NUnit tests with SQLite in-memory database

# Tests cover Core, Data, Services, and Web layers

# ---------------------------------------------------------------------------

test:

stage: test

image: mcr.microsoft.com/dotnet/sdk:9.0-alpine

tags:

- saas-linux-medium-amd64

needs:

- job: build

artifacts: true

script:

- echo "Running test suite..."

- dotnet test --no-build -c ${CONFIGURATION} --verbosity normal ${SOLUTION_DIR}

artifacts:

when: always

reports:

junit: ${SOLUTION_DIR}/**/TestResults/*.xml

# ---------------------------------------------------------------------------

# Stage 3: Build Docker image

# Multi-stage Dockerfile: builds the entire solution inside Docker

# ---------------------------------------------------------------------------

docker:

stage: docker

image: docker:latest

tags:

- saas-linux-medium-amd64

services:

- docker:dind

variables:

DOCKER_TLS_CERTDIR: "/certs"

needs:

- job: test

script:

- echo "Building Docker image..."

- docker build -t nopcommerce:${CI_COMMIT_SHORT_SHA} -t nopcommerce:latest .

- docker images nopcommerce

For each test run, we changed the tags from saas-linux-medium-amd64 to actuated-4cpu-8gb.

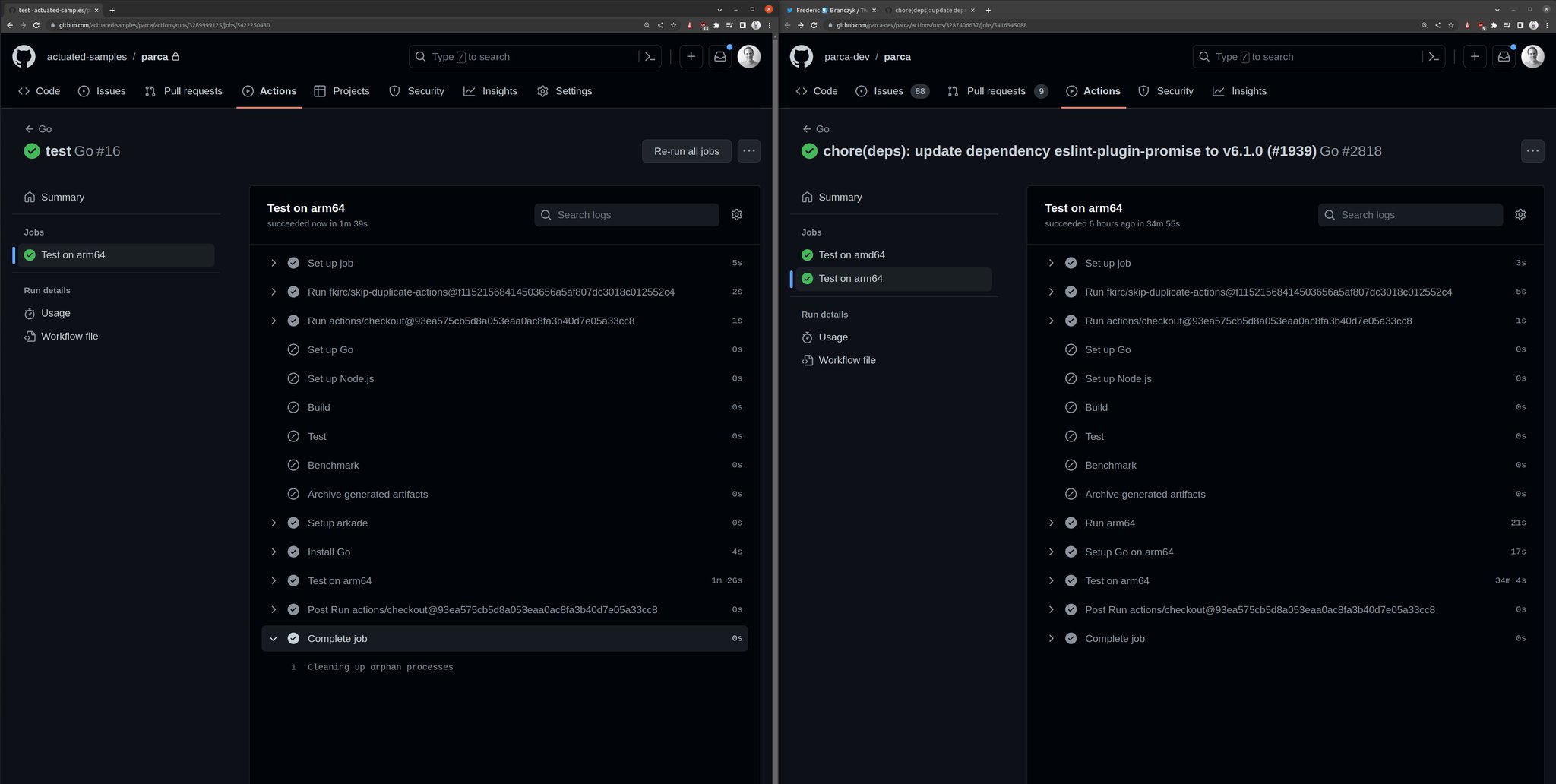

The actuated agent starts an ephemeral microVM per build, which gets registered against the project, and performs only a single build.

This tends to take around 1-2 seconds from the event being published by GitLab to seeing a build running.

So most of the savings came simply from switching from GitLab's hosted runners to actuated and a machine we set up to build projects under our group.

Finding more speed

If you look carefully, there's a flaw built into every GitLab job.

On Kubernetes, the runner itself is running within a container already, then yet another container is started docker:dind with a nested Docker engine running within that. Unfortunately, to make that happen privileged Pods are required, which GitLab itself warns about during installation.

On top of that, not only are they insecure by default, but they're slow because the native filesystem used by Docker and container runtimes, overlayfs, does not work when nested. So the worst possible storage driver gets used: VFS.

Unlike overlayfs, which is a high-performance Copy on Write (CoW) filesystem, VFS copies every file it touches, which as you can see amounted to at least 40 seconds of wasted I/O. Builds tend to be heavy on I/O, so you get the worst of both worlds.

With actuated, GitLab's runner starts inside a microVM, with a dedicated OS and systemd available. There's no nesting of Docker, a private Docker daemon runs directly alongside the runner.

We can access this via a shell executor step instead of a container step. It avoids downloading the large docker:dind image, avoids the overhead of having to start a second Docker daemon, and avoids using the VFS filesystem.

# ---------------------------------------------------------------------------

# Stage 3: Build Docker image

# Multi-stage Dockerfile: builds the entire solution inside Docker

# ---------------------------------------------------------------------------

docker:

stage: docker

tags:

- actuated-16cpu-32gb

- shell

needs:

- job: test

before_script:

- curl -fsSL https://get.docker.com | sh

script:

- echo "Building Docker image..."

- docker build -t nopcommerce:${CI_COMMIT_SHORT_SHA} -t nopcommerce:latest .

- docker images nopcommerce

So that one change clawed back 30-40s of build time.

Everything else was kept the same.

Our full table of results can be found below:

Actuated Runners

| CPU | RAM | Duration | vs GitLab Small | Notes |

|---|---|---|---|---|

| 16 | 32GB | 3m 59s | 60% faster | Shell executor for docker build |

| 16 | 32GB | 4m 42s | 52% faster | Docker executor |

| 8 | 16GB | 5m 01s | 49% faster | Docker executor |

| 4 | 16GB | 5m 06s | 48% faster | Docker executor |

GitLab's Hosted Runners

| CPU | RAM | Duration | vs GitLab Small |

|---|---|---|---|

| 4 | 16GB | 7m 21s | 25% faster |

| 2 | 8GB | 9m 50s | baseline |

Further work

Caching is the obvious next step.

Actuated runs a pull-through Docker registry on every server, so any image used in your CI pipeline only gets pulled from the Internet once, or when it changes, saving on bandwidth and time.

To take things further, we could look at caching layers from the docker build itself, either to the GitLab server, to a co-located S3 bucket, or an S3 server running directly on the host. We took this approach for Discourse's builds on GitHub Actions and saw further improvements.

To recap on differences from Kubernetes-based runners:

- No more managing Kubernetes clusters just for CI

- Keep your existing GitLab pipelines with minimal changes

- Run privileged tasks like

sudo,apt-get, Docker, and K3s without compromising security - Avoid Docker-in-Docker/VFS overhead with the shell executor

- Get faster build and job startup times on bare metal

- Start every jobs almost instantly in a fresh ephemeral microVM

- Adjust vCPU / RAM for every job through labels i.e.

actuated-1cpu-2gbup toactuated-32cpu-128gb

Your mileage may vary depending on where the bottlenecks are for your builds. If you've already tried the obvious things and feel like there's more improvement to be had, talk to us. We'd be glad to see if Actuated would be a good fit for your team.

]]>Actuated runners are 48–60% faster than GitLab's small hosted runner. Even the actuated runner (4cpu) outperforms the GitLab medium runner (4cpu) by over 2 minutes — a 31% improvement with matching specs.

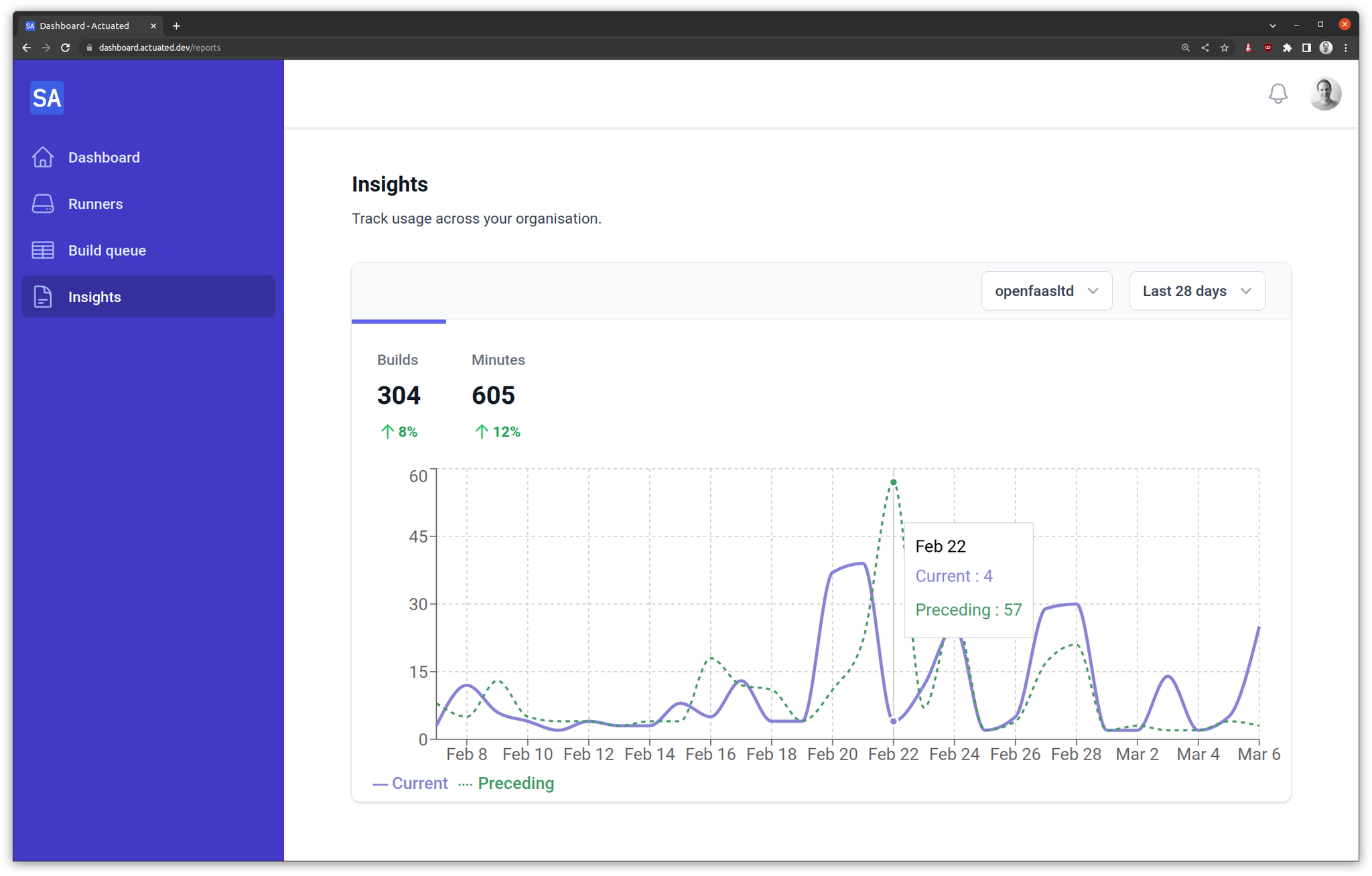

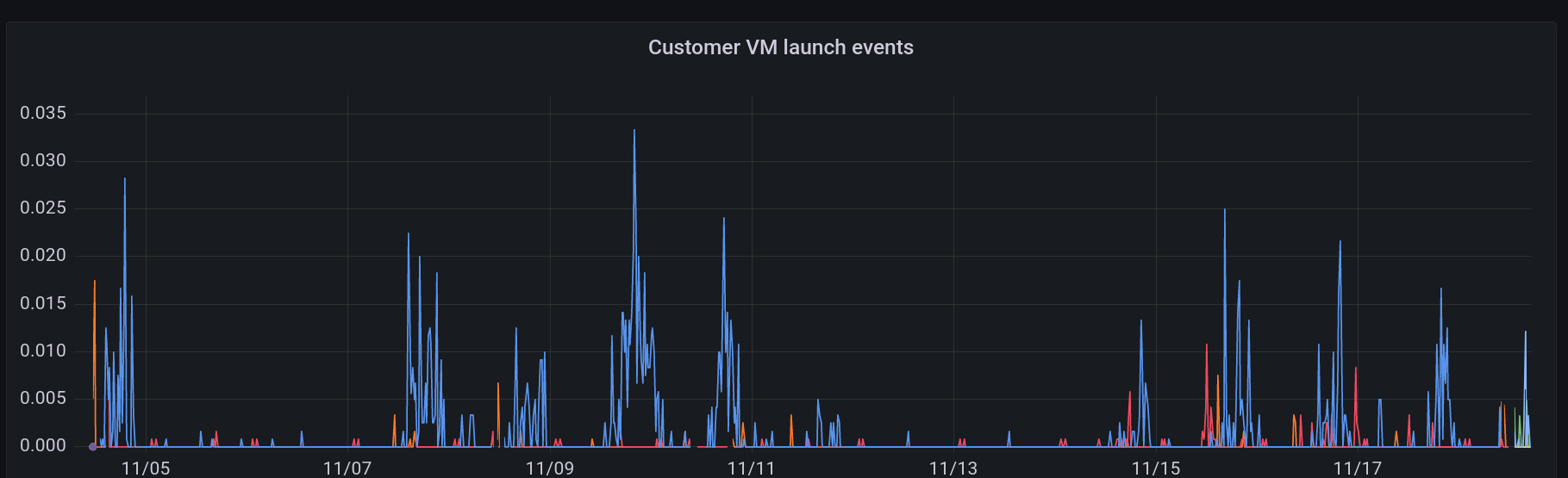

We're releasing our Q4 announcements early and we've got a lot to share with you.

- Auto Enrollment - a single bash command and your server is ready to use and specially tuned for GCP VMs with Nested Virtualisation

- Super speedy transparent cache - a cache that works with actions/cache and BuildKit - the only limit? local NVMe SSD

- Fractional CPU - run your builds on a fractional CPU i.e. 0.25 or 0.5 vCPU to lower your costs and improve efficiency

- Custom Billing - do you need high spikes of concurrency? In addition to our concurrency plans, you can reach out for plans charged by the minute

Auto Enrollment

Before

In the past, actuated customers would pick a server, load up an OS then do the following:

- Figure out the best free disk or partition to format for microVM snapshots

- Install and configure a Docker Registry Mirror/pull-through cache

- Install the actuated agent

- Create a DNS entry for the agent

- Send us an encrypted enrollment YAML file via email or Slack

Wait for us to add the host.

After

Now, with a simple snippet in your userdata, Terraform or Ansible playbook your server will be taking jobs in a minute or two.

After it's been offline for 15 minutes, it'll get cleaned up automatically.

Yes that means you can use Managed Instance Groups (MIGs) on GCP, or your own autoscaler to scale up and down as needed.

#!/bin/bash

curl -LSsf https://get.actuated.com | \

LICENSE="" \

TOKEN="" \

LABELS="gcp-ssd" \

HOME="/root" bash -

The LICENSE is sent via email at checkout, and the TOKEN is available on request after checking out. The TOKEN is known as an Account API Token and is used for onboarding new hosts, and for gathering Prometheus metrics

A Docker Registry Mirror/pull-through cache is installed by default, but if you give a DOCKER_USERNAME and DOCKER_PASSWORD to the script, it'll use your own Docker Hub credentials to pull images to greatly increase the pull limits.

When onboarding a recent customer they'd explained they were concerned about the steps to set up a new machine. We're happy to say that this is now a single command and your server is ready to use.

We're excited by how well the new auto-enrollment works and could see it leading to an autoscaler for GCP.

Savings with spot instances

One of our customers was excited to see this announcement because it means they can switch from a pre-provisioned c4-standard-16-lssd to spot instances, and save a considerable amount of money. They can also take their runner down over the weekend and bring it back any time they like with cron.

Auto Enrollment Demo videos

- Actuated Autoenrollment on Bare-Metal Cloud with Hetzner

- Actuated Autoenrollment on Nested Virtualisation (DigitalOcean) - the same approach can be used for GCP with Terraform, Ansible, or your own custom binary

Super speedy transparent cache

This announcement is a close second favourite for us behind Auto Enrollment.

One of the number one complaints about GitHub's hosted cache is that it's slow, especially from self-hosted runners.

We've always offered the ability for you to run an S3 cache directly on your servers, and instructions on how to use forked caching actions like testpkg/actions-cache which can target S3 instead of the proprietary GitHub cache.

But, this also involved manual setup and configuration of the cache.

The new approach is completely transparent to you.

actions/cache- will now use the local cache by default- BuildKit - will now use the local cache by default

setup-node,setup-python,setup-java,setup-ruby,setup-go- will now use the local cache by default

How does it work?

All requests to GitHub's caching servers (and only those requests) are intercepted and redirected to the local machine. No changes to your workflows are required.

Only caching requests are redirected and intercepted, all other traffic is passed through unmodified. This code runs on your server, and our team has no access to any of your traffic. "Super speedy transparent caching" to coin a term, will be opt-in. And the more manual approach (which is just as fast, just less convenient) will still be available.

Rough speed tests:

- GCP c4-standard-4 - Saving cache ~ 100MB/s

- AMD Ryzen™ 9 9950X3D / Hetzner AX102 - 276MB/s read / 478.9MB/s write

- Intel N100 - 89.9MB/s read / 155.7MB/s write

When using a Hosted Cache on a Hosted Runner, cache saves and restores can often throttled down to 1-10MB/s.

When using a Hosted Cache with a self-hosted runner, these figures are often much lower.

Fractional CPU

A DigitalOcean VM with 2x 0.25 vCPU actuated runners running jobs in parallel.

GitHub recommends a minimum of 2 vCPU and 8GB of RAM for its Runner which is written in C# and undergoes a Just In Time (JIT) compilation step when it starts up.

That said, since we introduced Profiling in the Actuated Dashboard, customers have noticed they can get away with much less than that, as low as actuated-1cpu-1gb.

Now, what if you have 100 jobs queued up which all perform basic automation tasks on PRs, issues, and repos?

At 1 vCPU each, a GCP c4-standard-16 could run around 16 jobs at once, but if you don't mind the extra time:

runs-on: actuated-0.25cpu-1gb- will run 64 jobs - that's 4x the amount.runs-on: actuated-0.5cpu-1gb- will run 32 jobs - that's 2x the amount.

We're also adding fractional RAM support with 512MB and 750MB being available in addition to the existing full numbers of RAM.

In practical terms, we ran a benchmark on DigitalOcean using a 4vcpu-8gb machine which is of course much smaller than we'd ever recommend for production use.

Installing stress-ng via apt took 7s with a VM with with actuated-1cpu-1gb, but when throttled to actuated-250mcpu-1gb, it took 26s.

name: stress-ng

on:

workflow_dispatch:

jobs:

stress:

runs-on: actuated-250mcpu-1gb

steps:

- name: Install

run: sudo apt install stress-ng -y

- name: Stress

run: stress-ng --cpu 1 --cpu-load 100 --timeout 2m

We have to remember that this system is configured to be multi-threaded - it uses systemd as its init, and runs the Actions Runner, on top of any other steps you need. So while 0.25 vCPU is not going to be as fast as 1 vCPU, it's still perfectly usable for many workloads.

What does this mean?

You get bin packing - the ability to run many more concurrent jobs, but at the cost of lower performance for those jobs.

Should you use it for a production build or a critical Terraform deployment? Probably not.

But if you're interacting with GitHub events, running linters, or bash scripts via a schedule - fractional CPU can save you a lot of money.

Custom Billing for large teams with spiky workloads

This year, we revisited the pricing for actuated and now offer two plans: Concurrency based plans - from 250 USD / month and Custom Billing.

Concurrency based plans

Servers: no limit Minutes: no limit vCPU: no limit RAM: no limit

Charges: maximum concurrent builds

This mode is a great place to start because the pricing is flat-rate and predictable. It never needs to change or spike, even if you have a large backlog to run through.

Our pricing calculator shows how easy it is to save money compared to GitHub's hosted runners, especially once you start needing more than 2x vCPU for jobs.

One customer told us they were running 36,000 builds per month on 4 vCPU Hosted Runners, costing them 1,440 USD per month.

With actuated's pricing calculator, that works out at roughly 1/2 of the price with a 10 concurrent build plan.

If they upgraded to 8 vCPU hosted runners their bill would have been 2,880 USD per month with GitHub, and 4x cheaper with actuated.

Custom Billing

With Custom Billing, we'll do our best to work out a plan that scales with your organisation's needs.

So if you ran 3,600,000 minutes per month, that'd be $28,800 USD per month on GitHub's smallest 2vCPU machines, and $57600 USD per month with a 4 vCPU runners.

Now clearly, if you also needed around 400 concurrent builds - actuated's concurrency based plan would not scale, so we'd work out a factor that lets us both grow together whether that's based upon:

- total minutes,

- total servers,

- or a graduated concurrency-based pricing model.

Custom Billing is available for any customer that needs more than 50 concurrent builds per month.

Wrapping up

On top of our previous announcement this month Actuated for Jenkins, we're excited to be able to offer you a more flexible and scalable way to run your CI/CD workflows.

You can be running jobs within a few minutes:

- Checkout on the pricing page - either book a call to talk to us, or go 100% self-service (both come with access to Slack during business hours)

- Deploy a bare-metal server on Hetzner Robot for the best price/performance ratio, or launch a VM on GCP, Azure, or Oracle Cloud with Nested Virtualisation enabled

- Obtain your Account API Token, and run the auto-enrollment script

- Change the

runs-on:label to i.e.actuated-4cpu-16gband start running jobs

Want to learn more? Talk to us on a call.

You may also like:

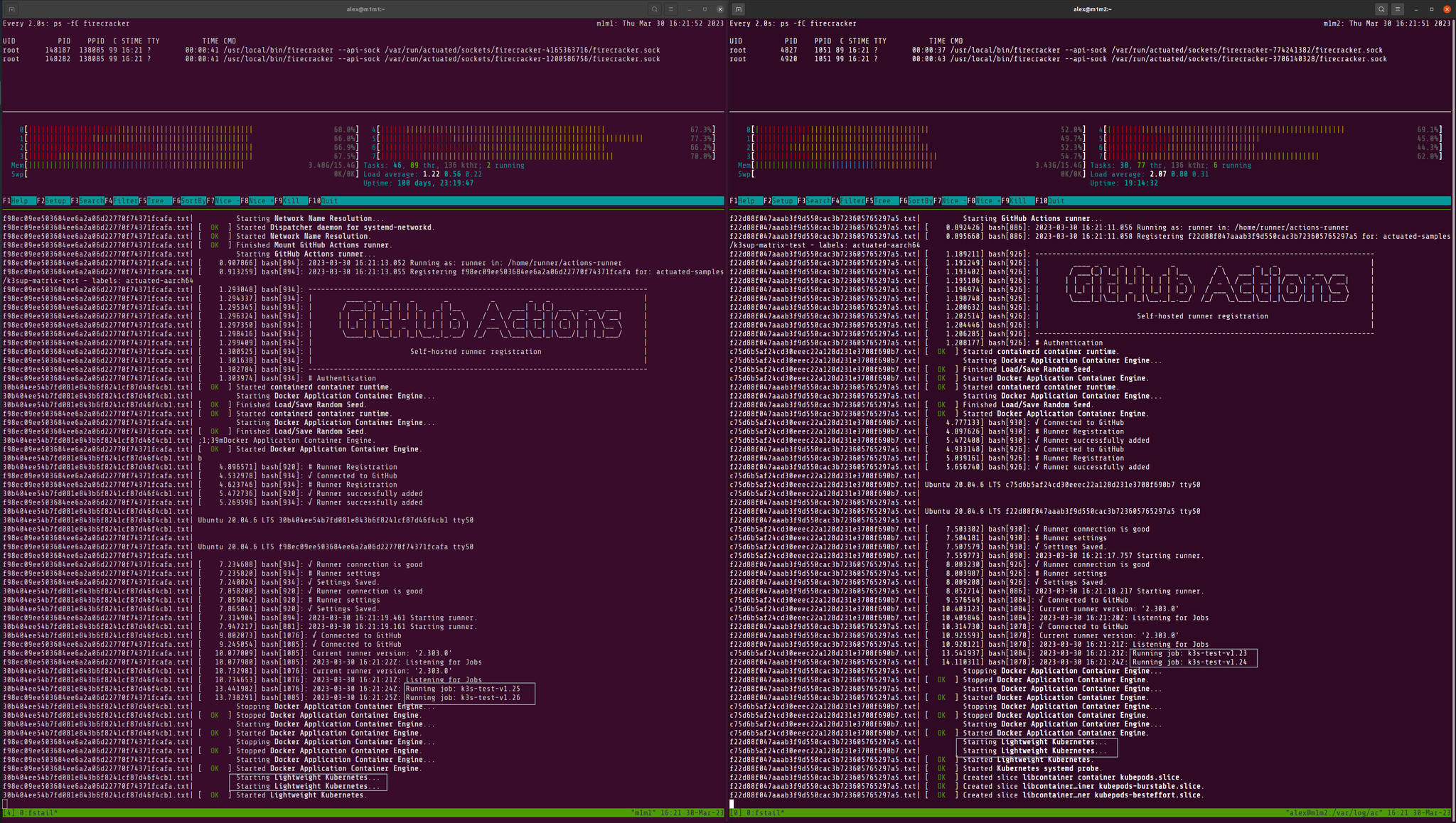

]]>In this post, we'll introduce actuated's new Firecracker plugin for Jenkins and why you should consider it over the existing plugins for Docker, Kubernetes, EC2, or GCE.

To say that Jenkins is a staple in the CI/CD world would be an understatement. This project was first released under the name Hudson in 2004 as a side project by Kohsuke Kawaguchi while working at Sun Microsystems. It has since evolved into one of the most widely used automation and build tools in the industry.

Just as when the World Wide Web was in its infancy, TLS termination and encrypted messages were the stuff of fiction - so were highly privileged daemons like Docker, and specialised tasks like building and loading Kernel modules into a build environment. Build environments were rarely immutable, ephemeral or hermetic.

In 2025 there are now a range of ways to make the build environment for Jenkins known as a "slave" or by more modern terminology a "runner" - ephemeral and less prone to side-effects. Whilst not natively implemented in Jenkins, the Docker, Kubernetes, GCE and EC2 plugins all provide a way to launch a build environment that is isolated from the Jenkins master and other builds.

But there are limits and tradeoffs to these approaches.

Kubernetes Plugin

I was recently speaking to a friend at jFrog which has a large self-hosted instance of Jenkins. He told me that using the Kubernetes executor, there were delays of between 1 and 5 minutes for each job, and that Pods had to run in privileged mode to allow Docker-in-Docker builds. This is a significant security risk, and one that many organisations are not comfortable with.

The Kubernetes plugin is inherently vulnerable to privilege escalation attacks when using a Privileged Pod or containers with root.

Scaling: Can request new nodes as required via Cluster Autoscaler, but this can add 1-2 minutes to launch time. Speed: Slow, in real world scenarios this can take 1-5 minutes to launch a Pod. Security: Medium if no Pod is allowed to run as root, or access Docker in any way. More usual rating is Low - all Pods run as root or a privileged Pod.

In high concurrency scenarios - Kubernetes rate-limits Pod launches. I just got off the phone with a customer who regularly launches 400-500 concurrent builds and couldn't understand why GitHub's Kubernetes solution could take up to 10 minutes to schedule all of the builds.

The readability of Kubernetes pipeline syntax is challenging and custom container images are required, which need to kept up to date (something that many teams struggle with).

podTemplate(

agentContainer: 'maven',

agentInjection: true,

containers: [

containerTemplate(name: 'maven', image: 'maven:3.9.9-eclipse-temurin-17'),

containerTemplate(name: 'golang', image: 'golang:1.16.5', command: 'sleep', args: '99d')

]) {

node(POD_LABEL) {

stage('Get a Maven project') {

git 'https://github.com/jenkinsci/kubernetes-plugin.git'

container('maven') {

stage('Build a Maven project') {

sh 'mvn -B -ntp clean install'

}

}

}

stage('Get a Golang project') {

git url: 'https://github.com/hashicorp/terraform.git', branch: 'main'

container('golang') {

stage('Build a Go project') {

sh '''

mkdir -p /go/src/github.com/hashicorp

ln -s `pwd` /go/src/github.com/hashicorp/terraform

cd /go/src/github.com/hashicorp/terraform && make

'''

}

}

}

}

}

Docker Plugin

If you've ever touched Jenkins for work, then you're likely familiar with the Docker plugin. It can either create a container for each build, or use a long-running container as a build environment. The former is more secure, but can be slow to start up, especially if the image is large or needs to be pulled from a remote registry. The latter is faster, but can lead to side-effects building up over time and is vulnerable to malware, unintentional changes, and ransomware attacks

The Docker plugin is a misnomer, sharing a Docker Socket, running in Privileged mode, or exposing a TCP socket means there are no boundaries or isolation between the builds and the host.

Whilst working with Intel on their CI/CD infrastructure, an engineer shared the woes of trying to get Docker itself to work within a build slave due to incompatible settings between Docker on the host and within Jenkins.

Docker runs as root, with the socket mounted, or exposed over TCP (even worse).

Scaling: By default scales across only one host - difficult to cluster and use a dynamic pool of hosts. Security: Low. Docker is running as root, the socket is mounted or exposed over TCP.

The Docker Pipeline plugin is slightly less verbose than the Kubernetes plugin, but suffers from the same issue. These pinned images tend to get out of date quickly, and need to be maintained. Enterprise companies are also likely to customise them for their own needs further exacerbating the problem.

pipeline {

agent {

docker {

image 'maven:3.9.9-eclipse-temurin-21'

args '-v $HOME/.m2:/root/.m2'

}

}

stages {

stage('Build') {

steps {

sh 'mvn -B'

}

}

}

}

EC2 / GCE Plugins

These plugins can run in one-shot or reusable modes. In the one-shot mode, you get a new VM launched which lasts for just one job, but it has a tradeoff. It takes at least 1 minute to launch the most stripped down minimal VM on AWS EC2, so job queue times are likely to be high. In reusable mode, the VM is kept alive for a period of time, and can be reused for multiple jobs. This is faster, but can lead to side-effects building up over time making it as bad as the long-running Docker container approach.

Scaling: If set to one-shot mode, a new host is created for each build 1-2 minutes of lead time. In reusable mode (default) a host is reused for multiple builds until it becomes idle and can then be terminated. Speed: Slow - at least 1 minute to launch a minimal VM. Security: (If configured for one VM per job) High. VMs are isolated from each other, and can be launched with minimal privileges. Low if reused. Configuration: Complex - requires managing AMIs, SSH keys, and network configuration. Many configuration options for the plugin to get it to work "right".

Summing up issues with existing plugins

- Slow to boot fresh environments

- Likely runs as root, with a Privileged Pod or Docker socket mounted

- Side-effects build up over time

- Complex configuration

- In high concurrency scenarios - Kubernetes rate-limits Pod launches

- Difficult to maintain custom images and infrastructure required for the plugins to work

Beware of the copious amounts of guides on sites like Medium.com and Dev.to that encourage you to take shortcuts and set up long-running, reusable build slaves. It's not 2004 anymore, and this approach is fraught with risk.

For a deeper dive into why the CNCF and Ampere choose Actuated and Firecracker for building OSS projects over GitHub's own supported solution, read: How secure are containers & Kubernetes vs. microVMs for self-hosted CI?.

A Next-Gen Approach

Compared to the approaches above, which all feel like retrofits to an architecture built in the early 2000s, Firecracker is a modern, lightweight virtualisation technology that was open-sourced by AWS in 2018. It is designed to run serverless workloads and container workloads with minimal overhead, and provides strong isolation between workloads.

Pictured: The Jenkins plugin for Slicer makes two REST calls over HTTP to start up two VMs for the requested builds.

The CNCF and Ampere Computing choose Actuated over Kubernetes

If you've heard of our work with actuated, then you'll know that the CNCF and Ampere Computing partnered to choose Actuated instead of GitHub's own Actions Runtime Controller (ARC) to run GitHub Actions for top-tier CNCF projects needing Arm compute. Over a period of 18 months, over 3 million CI jobs were run for Open Telemetry, runc, containerd, etcd, and many others.

Why? Primarily, ARC means managing a Kubernetes cluster with highly privileged Pods, so that common tools like Docker can be used in CI jobs. It has limitations to what will run - so you can't build and load a Kernel module like you can in Firecracker. You can't run a Kubernetes cluster to test your images - not safely, and not quickly. Docker in Docker relies on the VFS plugin aka "native snapshotter" which is up to 5-10x slower than overlayfs.

Firecracker has a headline of being able to launch a microVM in 125ms, but anyone who has used it for real world tasks knows that it'll be a good 1s to boot up a full build image with systemd and Docker preinstalled. Not at all shabby compared to the alternatives explored above.

So Firecracker, or microVMs more broadly give us:

- Full isolation of every build

- Fast start up time - 1s even with a bloated build image containing systemd, Docker, and other common build tools

- Run Docker and Kubernetes as root without risk

- Run apt-get, yum, dnf, zypper, etc with no side-effects

- Build, and load Kernel modules without risk or compatibility issues with the host

- Run untrusted code safely

Introducing the Slicer Plugin for Jenkins by Actuated.com

Instead of adding Jenkins support directly to actuated, we took a slightly different approach. We span out the internals of actuated into a general purpose VM orchestrator tool called SlicerVM.com. Then, we built a native Java plugin for a Cloud implementation just like the EC2, GCE, and Kubernetes plugins that launches microVMs on demand.

This was not a task for the feint of heart - for one it requires extensive use of Java, and two many undocumented and mysterious Jenkins-specific APIs, which run into race conditions and other oddities.

So for our friend working at an enterprise company, where Jenkins is firmly rooted and likely to be just as entrenched within the next 5 years, this plugin provides a way to run builds in microVMs with the mentioned benefits of lower queued times, running privileged commands the normal way - apt/dnf/yum/zypper, Docker, Kubernetes, etc.

That's enough about how it works and why it's better, let's see it in action on YouTube:

To get started, you need one or more machines capable of running KVM, with a Linux OS installed. We recommend Ubuntu Server LTS, however other RHEL-like operating systems also work.

You can use bare-metal in the cloud (i.e. Hetzner), or within your own datacenter, or within a VM where nested virtualisation is available i.e. VMware, OpenStack, or Azure, GCE, DigitalOcean, Oracle Cloud, etc.

For teams that are only able to procure from AWS, the options for bare-metal are a bit more limited, but we are starting work to make KVM-PVM more broadly available to our customers so you can run KVM with pagetable isolation on existing EC2 instances.

Example pipeline builds

Pipeline builds need no specific changes other than a build agent label, so that the plugin knows when a new microVM is required.

Example build using Docker:

pipeline {

agent { label 'slicer'}

options { timeout(time: 2, unit: 'MINUTES') }

stages {

stage('Build') { steps { sh '''

sudo systemctl start docker

docker run -i alpine:latest ping -c 4 google.com

''' } }

}

}

Example End to End test using Kubernetes:

pipeline {

agent { label 'slicer'}

options { timeout(time: 2, unit: 'MINUTES') }

stages {

stage('Build') { steps { sh '''

export PATH=$PATH:$HOME/.arkade/bin

arkade get k3sup kubectl --progress=false

export KUBECONFIG=`pwd`/kubeconfig

k3sup install --local --no-extras

k3sup ready --attempts 5 --pause 100ms

kubectl get nodes -o wide

kubectl get pods -A -o wide

''' } }

}

}

We used K3sup to setup the cluster, but kubeadm, KinD, minikube, K3d, minikube, Openshift's CRC, or any other local Kubernetes solution will work in exactly the same way.

Just like with actuated, custom VM sizes can be allocated without creating predefined sets: slicer-2cpu-gb or slicer-8cpu-16gb for example.

You can also customise the image to pre-install Docker, the latest JVM version, or whatever tooling you're used to obtaining from your in-house golden images.

Next steps

To try out Slicer for Jenkins, sign up for a personal or commercial subscription at SlicerVM.com and let us know that you'd like to receive the plugin. Each server requires a seat, however there is no limit on how large you want to make your server, so one machine can run a significant number of concurrent builds.

If it's too early for you to embark on a self-service Proof Of Concept, then you can contact us for more a brief call and demo via Zoom. The form mentions GitHub Actions, so feel free to use the free text box to let us know you're interested in Jenkins support.

Glossary

What's KVM?

KVM is a Linux kernel module that allows the kernel to function as a hypervisor. It requires a CPU with hardware virtualisation extensions such as Intel VT-x or AMD-V. KVM can also run on many cloud or on-premises VMs where nested virtualisation is enabled.

What's KVM-PVM?

KVM-PVM is a patch led by Alibaba Cloud and Ant Group which allows KVM to run with pagetable isolation on CPUs without hardware virtualisation extensions.

What's Firecracker?

Firecracker is an open-source virtualisation technology that runs lightweight microVMs with minimal overhead. It was open-sourced by AWS in 2018 and is used to run AWS services like Lambda and Fargate.

What's actuated?

Actuated.com is a managed service for running GitHub Actions and other CI/CD workloads in Firecracker microVMs. You Bring Your Own Cloud (BYOC) and it handles the orchestration, scaling, image management, and security of your runners.

What's Slicer?

SlicerVM.com was spun out of actuated to provide a general purpose YAML or API-driven orchestration tool for Firecracker. You can use it launch one-shot tasks like CI runners, or long-lived services like Kubernetes, web-servers, databases, etc.

]]>We introduce Burstable CPU jobs which allow for a minimum amount of vCPU to be set for any job, but more is allocated if available.

We'll also give you a reminder of other smart labels for more advanced scheduling like the actuated-any- label which can be used to allocate work to x86_64 or arm64 depending on availability to improve overall efficiency and resource utilization.

As a tl;dr:

Before: actuated-32cpu-32gb - a job would remain queued until a machine with 32vCPU was available

After: actuated-24cpu-32gb-burstable - a job will take as many vCPU as are available on a host, but will run with fewer so long as 24 are available at a minimum.

Impatiently Building Kernels

This new feature solves a problem we ran into when building many variations of the Linux Kernel on a finite set of servers.

Our largest x86_64 server is an A102 from Hetzner which has 32vCPU and 128GB of RAM. It can produce a fully-featured Kernel in around 3min30s when all of the 32vCPUs are allocated to that single job.

So when we need to rebuild 4-5 variations of that Kernel, our jobs get queued up and run in serial.

Iterating locally on my AMD Ryzen 9 7950X3D 16-Core Processor, before pushing changes to GitHub for a production build.

Option 1 - Buy more servers

The simplest solution is to purchase additional A102 servers from Hetzner, however the needs of our small team are sporadic and low in volume. So they don't warrant having high-specification hardware sat idle 99% of the time.

Another option could be to buy extra hardware and offer it to actuated customers, but the main reason to use actuated is for private, dedicated, and predictable tenancy.

Option 2 - Lower the vCPU allocation

At the same time, we recently purchased an Acemagic F3A Mini PC which has 24vCPU which run at a clock speed that almost matches the A102.

We could simply lower the vCPU allocation so that every Kernel job can run on either the A102 or the F3A, but that would result in a performance penalty when building a single Kernel. That may not be noticeable in automated builds, but it severely impacts the developer experience when we have to iterate on new Kernel versions or find a missing CONFIG_ setting.

Option 3 - Burstable CPU Jobs

The third option gives us the best both worlds. We get to specify a minimum vCPU amount i.e. 24 and some extra metadata, in this instance a -burstable label means that our scheduler will allocate additional vCPUs if they are available on a server during scheduling.

Old behaviour:

The job label is actuated-32cpu-32gb and can only run on the A102. The F3A is idle, and we have no parallel Kernel builds.

New behaviour:

The job label is changed to actuated-24cpu-32gb-burstable.

The first job runs on the A102, and subsequent jobs run on the F3A.

If we had a third mini PC enrolled into our account with i.e. 12vCPU, we could change the label to actuated-12cpu-32gb-burstable and have a maximum of three Kernel builds running at any one time.

Wrapping up

The new burstable feature works for both x86_64 and arm64 builds. It's a convenient way to squeeze more out of your existing servers whilst only trading off performance during busy times.

If you're interested in the Acemagic F3A, it's available on their UK and US websites.

ADLink Ampere Developer Platform pictured with a GPU workstation and Mac Minis running Linux

Servers or mini PCs running in your datacenter, in an office cupboard, or under your desk can still be accessed via actuated.com through private peering.

Did you know about the actuated-any label?

In a similar vein to Burstable CPU, in 2023 we introduced an actuated-any prefix for jobs that could run on either x86_64 or arm64 architectures, allowing for even greater flexibility in job scheduling.

This is ideal for automation and jobs which use interpreted languages like Python or Node, that can run on either architecture without modification.

name: check-pull-requests

on:

push:

branches:

- master

- main

workflow_dispatch:

permissions:

actions: read

jobs:

check-pull-requests:

name: check-pull-requests

runs-on: actuated-any-1cpu-2gb

steps:

- uses: actions/checkout@v5

- name: Check Pull Requests in Repository

run: |

echo "Checking for open pull requests..."

npm run check-pull-requests

We take at how to implement egress filtering in a CI environment, without relying on a solution that runs within the job itself. This approach is particularly useful for preventing data exfiltration and ensuring that sensitive information remains secure.

Introduction

In a time where high-street names, large retailers and airlines are regularly experiencing global outages due to cyber attacks, organisations are asking themselves how they can better protect their data and systems.

The rise of malware, cyber attacks, and ransomware are becoming a major concern for organizations - and rightly so. A single successful attack can lead to significant financial losses, reputational damage, and legal consequences. A notable example in the UK is the brand M&S, which ii likely to suffer disruption to last until July and will cost £300m (approx 400m USD).

It's not just big brands - malware is increasingly being included in the software supply chain such as the xz incident, malicious packages in PyPy, and npm.

Typically, we would consider a language like Go to be a safe choice, however it is also not immune and has been a target for data exfiltration attacks and more recently disk wiping.

So what's the answer? There are many - from runtime security measure, to static analysis, to careful control over what software and base images are used within a job.

But many of these measures rely on software executing within the job itself, which means that there is potential for the job to be compromised before the security measures are applied. This is where egress filtering with actuated comes in.

Egress Filtering with Actuated

Actuated is a CI solution that provides a secure and ephemeral microVM for each job whether that's for GitHub.com or GitLab CI users.

With a container-based CI solution, you may typically need to run a Kubernetes cluster, and then run a set of long-lived containers within that, which can be compromised over time by side-effects of previous job runs. You may consider using a tool like Istio to implement egress filtering, but it will often get in the way of your other workloads or control-plane components within the cluster.

Not to mention the complexity of setting up and maintaining such a system, and the inherent risk of running containers as privileged or mounting a Docker socket.

The Actuated agent is a lightweight process that runs on a Linux host and is responsible for managing the lifecycle of the microVMs. It provides a secure environment for each job, ensuring that the job runs in isolation and cannot access any sensitive information or resources outside of its own environment.

The Actuated agent starts a microVM for each job, which is isolated from the host and other jobs. All network access has to go through a special Linux bridge.

All network traffic from the microVM is routed through a network bridge, which means we can apply policies and control the traffic that is allowed to leave the microVM. This allows us to implement egress filtering without relying on software running within the job itself.

Example of HTTPS filtering for an allowed domain:

Pictured: HTTPS request is allowed to egress when it matches the whitelist, otherwise it is blocked. The agent also applies DNS filtering to prevent data exfiltration.

For a domain that's not in the list:

Pictured: The request to a domain which is not on the whitelist is blocked by the agent, preventing the malware from accessing the remote endpoint.

The whitelist

The most practical form of filtering bans all outgoing TCP and UDP traffic, then allows only a sub-set of whitelisted domains to be accessed, ideally without involving a costly enterprise-grade intercepting HTTPS proxy server such as Cisco Umbrella or Zscaler.

Egress filtering is not for the feint of heart, it requires careful consideration of the traffic that is allowed to leave the microVM - and a basic GitHub Actions job that only prints "Hello World" may genuinely need to access up to 10-20 different domains just to run and publish its built-in telemetry.

Pictured: a build that starts a K3s cluster, something which is not possible on Kubernetes without resorting to privileged containers or mounting the Docker socket.

A practical whitelist may look like this for a very minimal build:

/etc/actuated-egress/whitelist.yaml

allowed_domains:

- api.github.com

- gitlab.com

- github.com

- google.com

- archive.ubuntu.com

- security.ubuntu.com

- '*.actions.githubusercontent.com'

- checkip.amazonaws.com

- '*.blob.core.windows.net'

- raw.githubusercontent.com

- codeload.github.com

- objects.githubusercontent.com

- deb.nodesource.com

- download.docker.com

- trafficmanager.net

- a2z.com

# Custom entries for our usage

- get.arkade.dev

- openfaas-live.actuated.dev

- "*.o6s.io"

- "*.openfaas.com"

To build the list, you can set actuated to run in audit-only mode.

/etc/default/actuated-egress

AUDIT_MODE="true"

COREFILE="/etc/coredns/Corefile"

WHITELIST="/etc/actuated-egress/whitelist.yaml"

LOGS_PATH="/var/log/actuated-egress"

Instead of blocking traffic not present within the whitelist, it will log the domains accessed to a file in: /var/log/actuated-egress.

You can then run a well-known job, gather the list of domains, assess them one-by-one and add any required.

Then flip the AUDIT_MODE to false and restart the agent - at this point, you can try to run the job again to see if it works as expected.

80/20 principle

Whilst you could purchase a costly web-filtering solution such as the ones mentioned above to look at layer 7 traffic - this requires that every process in the job is proxy-aware, and can be configured to use a HTTP proxy. That's not often the case with Linux-based software, and it is a serious pain to configure.

Instead, we can rely on actuated's transparent HTTPS proxying and DNS filtering to provide a solid level of protection against data exfiltration, without overwhelming the user with configuration.

First, all traffic outgoing from the VM is blocked and denied by default.

Then, all traffic to port 80 and 443 is transparently redirected to actuated's agent, which applies the whitelist we've already explored.

Next, ICMP, UDP and other non-TCP traffic is blocked by default, and then DNS traffic is again, re-routed to actuated's agent, which applies the same whitelist to the DNS queries.

This means, that if an npm package, PiPy package, or Go module tries to access a domain that is not on the whitelist to exfiltrate a credential or source code, it will be blocked by the agent.

Conclusion

When you combine a ephemeral microVM with egress filtering, you can create a secure CI environment that is resistant to many kinds of data exfiltration and malware attacks.

Unlike with containers and Kubernetes, the one-shot microVMs used by actuated have their own guest Kernel so they can run Docker, Kubernetes, even custom Kernel modules without any risk to the host or other jobs.

Then a default deny for all outgoing network traffic, combined with restrictive whitelisting of domains applies the 80/20 principle to egress filtering, allowing you to run jobs without needing to configure a complex proxy or web-filtering solution.

If you'd like to talk to us or find out more: contact us here.

You can watch a demo below of the filtering in action:

]]>You've landed here because you're looking for a secure way to run self-hosted CI runners. Our solution isolates CI jobs in one-shot, ephemeral microVMs, but you might be wondering: Why microVMs? Why not just install a self-hosted runner on a VM or use Kubernetes?

These are valid questions. If you've never considered the security implications of these setups, take a moment to see why microVMs offer a secure alternative.

As of today, we've processed 4.4 million minutes of CI jobs for commercial and open-source CNCF projects, all running in securely isolated microVMs that boot in under a second and are destroyed immediately after the job completes. Some customers use a pool of servers that we manage as part of the subscription, and others have their own dedicated bare-metal hardware rented from cloud providers like Hetzner or Equinix.

This article will walk you through the risks of common self-hosted CI solutions and why microVMs are the safest choice.

- What does GitHub have to say about self-hosted runner security?

- The side effects of an uncontained self-hosted runner

- Kubernetes and Docker are secure and ephemeral right?

- So what's the alternative?

- Wrapping up & further resources

It's not the first time I've spoken on this topic, you'll find a recording from a conference talk I gave, and a link to the original announcement over two years ago.

What does GitHub have to say about self-hosted runner security?

According to Self-hosted runner security:

We recommend that you only use self-hosted runners with private repositories. This is because forks of your public repository can potentially run dangerous code on your self-hosted runner machine by creating a pull request that executes the code in a workflow.

This is not an issue with GitHub-hosted runners because each GitHub-hosted runner is always a clean isolated virtual machine, and it is destroyed at the end of the job execution.

Untrusted workflows running on your self-hosted runner pose significant security risks for your machine and network environment, especially if your machine persists its environment between jobs. Some of the risks include:

- Malicious programs running on the machine.

- Escaping the machine's runner sandbox.

- Exposing access to the machine's network environment.

- Persisting unwanted or dangerous data on the machine.

The side effects of an uncontained self-hosted runner

My earliest memory of the self-hosted runner went a little bit like this.

- OK that was easy, what is everyone complaining about?

- Runs my first job - oh that apt package is missing, let me install it..

- Goes red again - oh yeah, better add that too

- And that one

- 50 minutes into the build and something else is missing 🤦♂️

Just installing all the dependencies into your VM that are in the hosted runner, to get some semblance of parity is an arduous task and an ever moving target.

Next, you create a KinD cluster and it goes well for the first run.

The same job that passes on a hosted runner now fails because the KinD cluster is still in place from the previous run:

% kind create cluster --name e2e

ERROR: failed to create cluster: node(s) already exist for a cluster with the name "e2e"

So you create a random name for the KinD cluster. Genius! 👩🔬

But now your Docker library is full of orphaned KinD clusters and you run out of disk space.

I could go on, but I won't.

Not to mention, this self-hosted runner can only run on job at a time, so you're wasting a lot resources.

When it crashes, you have to duplicate all the work you did to get it running, on another machine.

And you have zero security, no form of isolation, and any job that runs, whether done with malice, or misguided good intent, or an accident can leave the environment compromised.

Kubernetes and Docker are secure and ephemeral right?

Docker is great, Kubernetes is a solid platform, however neither are suited to running CI workloads.

You cannot build, then load an eBPF module into a Kernel using a self-hosted runner in a container.

You cannot safely:

- run Kubernetes itself in a Kubernetes Pod

- build a container image

- run a container image you've built.

In order to do these things, you have to install Docker into your container/Pod, and start it up. Docker is a daemon that runs as root, and requires host-level privileges in order to do its work.

There are two ways Actions Runtime Controller and GitLab's Kubernetes Controller go about this:

-

Mounting a Docker Socket.

Docker has to be running on the host for the container or Pod. You expose the socket via a bind-mount into the container.

Any CI job that runs can take over the host, and worse, can probably make privileged calls into the Kubernetes cluster, and exfiltrate any secrets such as cloud access keys.

-

Running as a Privileged container

When you run a Pod as a Privileged container, a separate Docker Daemon starts up. It does not share the daemon with the host, however it gives a false sense of security.

Whilst you now have two docker daemons running, the one running within in your container has to use Virtual Filesystem (VFS) - a slow, and expensive emulated filesystem that is required to support Docker inside Docker.

The Pod itself has a privileged runtime profile, which means: it has unrestricted access to the host's resources, including the ability to manipulate kernel modules, access devices, and interact with sensitive system-level functions. Running with these elevated privileges opens up significant security risks:

- Host Compromise: Since the container has broad access to the underlying host, it could potentially manipulate the host’s configuration, modify system files, or install malicious software.

- Kernel Exploits: If there are any vulnerabilities in the host kernel, a privileged container could exploit them to gain root access to the host.

- Device Access: Privileged containers can interact with devices on the host, such as block devices, USB devices, or network interfaces. This can lead to unauthorized access or data leakage.

- Increased Attack Surface: Privileged containers can perform actions that are generally prohibited in standard containers, such as changing sysctl parameters or configuring iptables rules, which expands the attack surface.

In short, Kubernetes and Docker are not secure for running CI workloads that require anything beyond basic user-space tasks. They lack the isolation and security needed for complex, multi-tenant CI environments.

So what's the alternative?

GitHub emphasizes that their hosted runners run in ephemeral, isolated virtual machines. But when you're managing your own runners on VMs or in Kubernetes, the environment is anything but ephemeral. Jobs can persist malicious code, leak secrets, and compromise the entire system.

GitHub-hosted runners execute code within ephemeral and clean isolated virtual machines, meaning there is no way to persistently compromise this environment, or otherwise gain access to more information than was placed in this environment during the bootstrap process.

Self-hosted runners for GitHub do not have guarantees around running in ephemeral clean virtual machines, and can be persistently compromised by untrusted code in a workflow.

Some people will start to look for how to run Docker without a Privileged Pod, without a Docker Socket Mount, without root, but all these solutions tend to be half-baked, and still involve using root somewhere along the line. User namespaces have come up a number of times, but are not compatible with every kind of Kernel, and user workload.

There’s no simple workaround here. Tools like Kaniko, Buildah, and BuildKit do exist, but they introduce complexity and, at some point, often require root access or privileged operations—just not where you might expect it. Linux User namespaces were meant to address these issues, but they come with their own set of challenges, like compatibility with certain workloads and kernels, and they aren’t a universal solution.

Each of these tools focuses primarily on building containers, not running them in a secure way. When you need to run Kubernetes, K3s, or even Docker within a CI job, you're effectively back at square one. These tools try to patch up container isolation issues with band-aid fixes, but none of them offer the clean separation and security needed for running truly secure CI jobs.

On top of that, they frustrate the developer experience. Teams want to get things done with familiar tools — most notably, Docker - which they use in local development. By introducing these alternative tools, you add friction, slow down the workflow, and ultimately, you’re still left with a suboptimal security model.

Over two years ago, even as a staunch supporter of Kubernetes, I finally realised that putting a square peg into a round hole was simply not working, and that there was a better way.

Docker containers and Kubernetes Pods can be great for running multi-tenant workload, but this all changes the moment that they need host level privileges. And frankly, anything but trivial CI jobs tend to need a full Operating System.

VMs have a bad rap for being bulky, presenting a large attack surface, expensive to license, difficult to automate, and in the cloud world, slow to boot up.

That's where microVMs come in. Unlike traditional VMs or containers, microVMs:

- Boot in under a second.

- Run from lightweight root filesystems.

- Provide true API-driven automation with minimal overhead.

- Strip out unnecessary devices and features, focusing only on what's needed to run a job securely.

With microVMs, we get the isolation and security benefits of VMs without the bloat. They don’t need UEFI or BIOS, and they limit the attack surface dramatically.

The two best known solutions are:

- Firecracker by the AWS team - focused on short-lived workloads

- cloud-hypervisor (a fork of Firecracker) with additional support for PCI devices, and long-lived workloads

In Summer 2022, I built a prototype to run self-hosted runners with GitHub Actions, and reiterated some of the issues we discussed today. By October, we'd launched a Pilot and ran tens of thousands of securely isolated jobs for our first customer in just a few days.

Enter actuated 🤘

— Alex Ellis (@alexellisuk) September 24, 2022

1) You set up a number of hosts with a bare OS and our agent binary

2) We run the control plane and start one-shot microVMs for every job

3) We managed the base VM image with all its tools

4) We schedule efficiently not to waste money or to exceed resources pic.twitter.com/Xn2dz77vad

I spoke at Cloud Native Rejekts just before KubeCon, to staunch Kubernetes users, and got a resounding round of applause. I think something clicked, people realised Kubernetes is a wonderful platform, but we need something different for CI.

There are downsides however. Working with microVMs needs low-level Linux expertise, and I'm not talking about the kind of insights you get from Googling or a ChatGPT session. To make secure and proficient use of them requires a deep understanding of Kernel configurations, how to debug them when something is missing, what makes up a root filesystem, Linux networking, cgroups, and so much more. And that's before you've even started building a UI dashboard, API and integration with a CI system like GitHub or GitLab.

The community support for Firecracker is very limited, since the maintainers are more focused on how AWS uses the technology, than furthering external adoption. For that reason we've have to do a lot of independent R&D, and leant into my past experience with Linux systems engineering from OpenFaaS, and inlets.

If you'd like to get a flavour of what it's like to run a microVM, you can try out my quick start: Grab your lab coat - we're building a microVM from a container.

Wrapping up & further resources

There are various reasons to consider self-hosted runners: cost optimisation, access to faster hardware, running on Arm hardware for multi-arch builds, and for the fastest possible access to private networks.

If you're considering using a self-hosted runner installed on a VM for an OSS project, just don't. The risks are obvious, and there is no up side. GitHub is very clear about the risks. You may get away with it for a limited time for a closed-source repository, but it is a lot of work keeping up, and coping with side-effects. Is this the secure environment that your customers would expect you to be using?

If you're considering using a Kubernetes-based solution, make sure that you only run tools that are safe like Python, Node, Go, without building or running any containers. You may be OK, if you have set up comprehensive network policies to prevent attacks to the internal network and any cloud metadata services. One of the first thing penetration testers look for is an unsecured cloud metadata endpoint, it can often be used to obtain access keys to your cloud account through IAM roles that are applied to the base host.

When you install GitLab through their helm chart, their warning is clear:

You've installed GitLab Runner without the ability to use 'docker in docker'. The GitLab Runner chart (gitlab/gitlab-runner) is deployed without the

privilegedflag by default for security purposes. This can be changed by settinggitlab-runner.runners.privilegedtotrue. Before doing so, please read the GitLab Runner chart's documentation on why we chose not to enable this by default. See https://docs.gitlab.com/runner/install/kubernetes.html#running-docker-in-docker-containers-with-gitlab-runners

If you need to run a container, or build one, you really only have two safe options: hosted runners or a solution like actuated.

Hosted runners have come on a long way since we started a couple of years ago, however they are still 2-3x more expensive than actuated at higher volumes. The Arm support is still limited, if you need access to large datasets of LLM models, a VPN is simply too slow, and running a microVM next to the data will be night and day quicker.

If you're interested in trying out actuated, or hearing more, you can get in touch with me and the team here: Talk to us about Actuated

You can also find out more conceptual and technical details about actuated in the Frequently Asked Questions (FAQ).

See also:

]]>Over two years ago we registered the actuated.dev domain with the intention to fix various issues with self-hosted CI runners for GitHub Actions using microVMs.

It's been a long journey and microVMs are not for the feint of heart, and we've spent a significant amount of time building a scheduler, agent, Kernel, root-filesystem, dashboard and reporting to make it work well for CI workloads. microVMs remain a poorly documented and hard to use technology, so whilst basic things can be made to work in a short period of time, the most important workings have to be understood only through trial & error and experience.

I'll set out what we wanted to address in November 2022, the direction customer feedback has taken us, and what we're planning next.

What we set out to address

Looking back, we've addressed all the original concerns, and covered new areas too:

- Every build job runs in an isolated microVM

Why is this important? Unlike the standard self-hosted runner available from GitHub, an isolated microVM means that there can never be side effects left over between builds. One of the first problems we ran into with self-hosted runners prior to creating actuated, was side effects build up in surprising ways and causing flaky builds, and frustrating errors.

- Docker without the security concerns

When a self-hosted runner executes inside a container, then makes use of Docker, it usually involves mounting a socket from the host, running as a privileged container, or running as root. In each case, it's trivial to escape the container and gain full administrative access to the host. So if you're running Actions Runtime Controller (ARC) or GitLab CI's Kubernetes operator and make use of Docker in any way, beware. Not only can a supply chain attack steal credentials, exfiltrate data & code, run cryptominers, but it can also escape the container and gain full control of the host and possibly the Kubernetes cluster and wider network.

Some teams believe that having a "separate Kubernetes cluster for CI" solves these problems. It does not, it makes it harder to recognise when the CI system has been compromised, and since these will often run in the same cloud account, may make it possible to escalate privileges to the wider network.

- VMs without the boot-up time

Actuated microVMs are finely tuned, so that even with a fully isolated Docker daemon running, the system is ready within 1s and connecting to GitHub's control-plane. If you've ever used an autoscaling group on AWS ECS, you're probably more used to a 3-5 minute boot-up for a new VM.

- Feels like managed, but is still self-hosted

With actuated, we operate a managed control-plane, which means you don't have to think about GitHub Apps, bot accounts, rate-limits, what to do when GitHub has an outage. The control-plane receives notifications from your GitHub repositories, but has no access to the code or secrets. Only the GitHub runner software itself can checkout code and run jobs using GitHub's fine-grained security mechanisms, the same ones used for managed runners.

So what do you have to manage? You'll need to rent or provision a server with KVM (nested, or hardware) and then follow our guide to install the actuated agent, or install our SSH key and we'll use an automated process to do it for you. After that, you can manage your runners and build-queue via the dashboard and we'll monitor your servers via our central Grafana dashboard.

- Automated - Over The Air (OTA) updates

Our own CI system builds both the actuated agent, and the root filesystem used for CI builds for GitHub Actions and GitLab CI on an automated basis. These are pushed out over the network to agents, so you never have to worry about updating the self-hosted runner, or the software within the VM image.

For a case study from Calyptia (since acquired by Chronosphere.io), see: Scaling ARM builds with Actuated

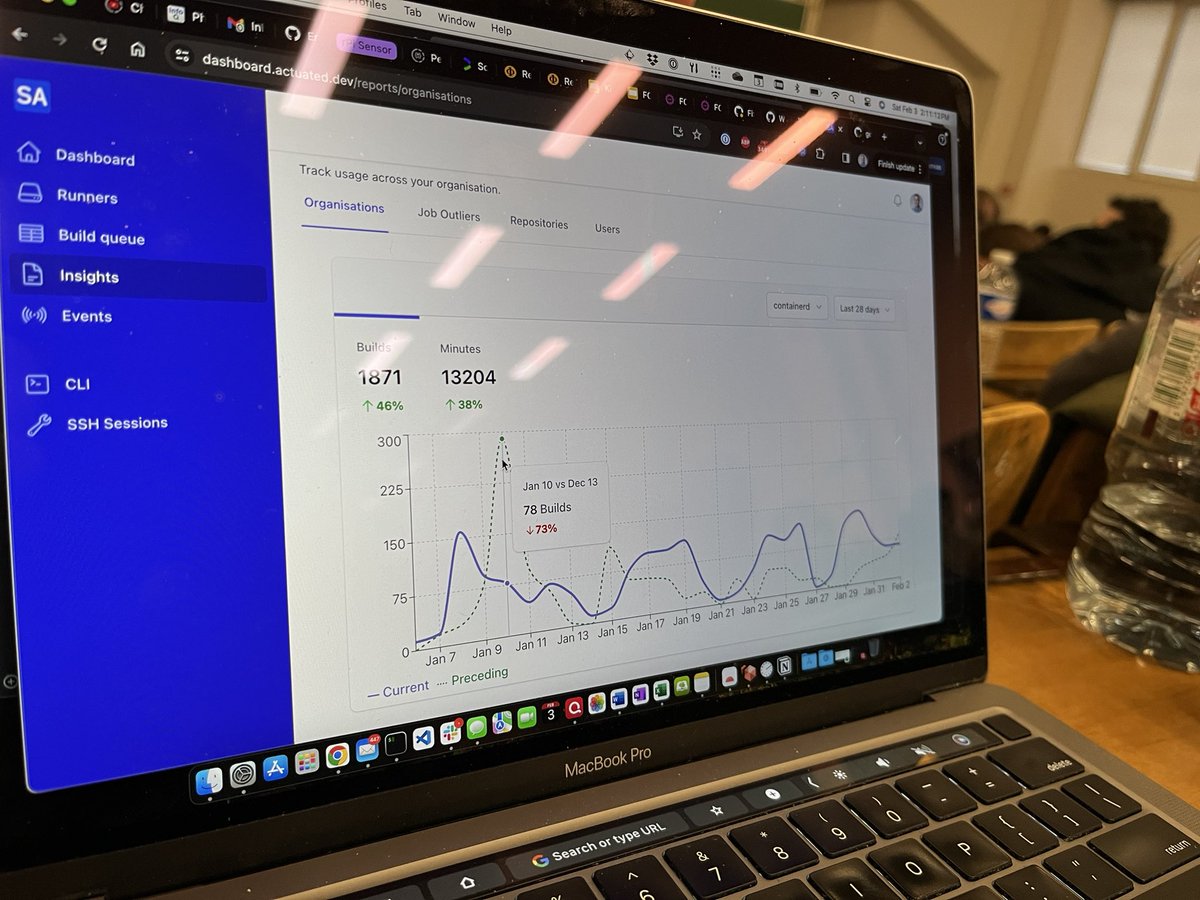

Predictable flat-rate pricing, with new burst pricing

The pricing for actuated was designed to be predictable and reasonable. If you run 100 builds or 1 million builds, you'll get charged the same amount, with concurrency being limited to whatever you decided fits your needs.

For teams with a large amount of minutes, or larger runners than the "standard" tier of GitHub Actions, this can result in a 2-6x cost reduction.

Even with flat-rate pricing, and a stable concurrency level of say 5 or 10 concurrent builds, there will be times where you need to cut a release, and want many jobs to run at once.

For those times, we listened to your feedback and introduced burst pricing. You can opt-in to add extra concurrent builds to your account, which are only used if there are queued jobs for a set period of time.

Pay only when needed: Let's say you hit your limit often, but are happy with some queueing. You can add an extra burst of 20 builds, but a queue time of 10 minutes. Then your extra concurrency will not get used until there have been jobs waiting for 10 minutes.

Clear the queue as quickly as possible: You could have a base limit of 20 builds, but a burst of up to 100 builds. You'll only get charged for what you go over on a particular day, and only if you go over your base limit. If you stay within 20 concurrent builds, you'll pay nothing extra.

See also: Burst billing and capacity for GitHub Actions

Server pricing & white-glove service

Today, most of our customers use pay per minute bare-metal hosted by a cloud provider. But our first pilot customer was a mid-sized start-up who loved to run their own servers, maintain their own infrastructure. They bought Dell servers from eBay, packaged them with new hardware, then had a team of 5 engineers on standby to refurbish and manage them.

Over time, we started to meet teams who wanted to outsource the server installation, maintenance, and monitoring to someone else. This makes actuated feel even more like managed runners, whilst keeping the benefits of self-hosted infrastructure. We're able to offer this as part of your subscription, because once we've run the installation of the agent, there is rarely a reason to log into the host again. In the rare event of a catastrophic failure, we can have the server OS image flashed and the agent reinstalled within a few minutes.

For our compute intensive application, with testing taking 30-45 minutes, every second counts. Actuated has helped us keep our development speed up by making sure we ran on fast bare-metal servers and by helping us find bottle necks in the testing process. We were able to drop our testing time by 50% whilst saving 3-6x per month versus similar hosted GitHub Actions minutes.

Justin Gray, CTO at Toolpath

One of the downsides to using a technology like Firecracker is that it requires KVM or nested virtualisation to be available on the server. If you're using AWS already, you'll be disappointed to learn that their bare-metal hardware is not only overpriced, but it isn't suited to CI workload which require a fast processor and storage.

We put together a list of servers that we recommend and the rough costing for them, but to make it simple, you cannot beat Hetzner. Most of our customers rent bare-metal from them and we've been very pleased with the value for money and sheer performance.

- Docs: Provision a server

Content & education

There are several unresolved problems with using self-hosted runners, not to mention the security issues. We've been working on a series of blog posts to help educate teams on how to get the most out of their self-hosted runners.

- Running a local cache to reduce latency Testing the impact of a local cache for building Discourse

- Keeping costs down, and servers utilised with our metering tool Right sizing VMs for GitHub Actions

- Safely launch VMs and different OSes - How to run KVM guests in your GitHub Actions

- GitHub pays Docker.com for unlimited Docker Hub pull keys, here's how to solve the problem for self-hosted runners: Set up a registry mirror

Along with the VM usage metering tool, I mentioned earlier, which you can use on both managed and self-hosted runners, we created a free open source tool called self-actuated/actions-usage. You can use it to generate a report of your personal account's usage, or your organisation's usage over a period of time, you can even run it on a schedule as a GitHub Action. Customers have reports backed by a database, which can run queried on varying time periods and filter by repository, user, or organisation: actuated dashboard

Artificial Intelligence (AI), Machine Learning (ML) and GPUs

We started to see customer interest in AI and ML workloads for GitHub Actions and GitLab CI, but found that Firecracker could not support PCI devices such as GPUs. After spending time on R&D, we added support for cloud-hypervisor which has slightly different goals than Firecracker. Rather than focusing purely on serverless workloads, it adds support for PCI devices and is even able to run other Operating Systems than Linux.

Sponsored CI minutes for the Cloud Native Computing Foundation (CNCF)

The CNCF and Ampere Computing joined together to sponsor CI minutes for top-tier open source projects such as containerd, fluentd, Open Telemetry, cri-o, ArgoCD, Falco, eBPF and various others. In May this year we'd already run over 1.5 million CI minutes for these projects, and we're proud to be able to support the open source community in this way.

- On Running Millions of Arm CI Minutes for the CNCF

- Is the GitHub Actions self-hosted runner safe for Open Source?

Ampere is delighted to partner with Actuated and the CNCF on their ambitions of improving the state of Aarch64 software. The combination of Actuated's tools and methods for managed CI and Ampere's cloud native processors for fast and secure builds makes for a tremendous advantage to accelerate the availability of cloud native software for the Aarch64 ecosystem.

Pete Baker, VP Customer & Developer Engineering

We've also sponsored the Atuin, runc and criu open-source projects. Ellie, the maintainer of the popular Atuin tool for syncing bash history said:

"Actuated has been a lifesaver - we were really struggling with slow, emulated, hour plus ARM docker builds. With Actuated, our builds are now incredibly fast and finish in a few minutes"

Ellie Huxtable, Atuin maintainer

What's next?

If you can relate to anything we've covered here on costs, performance, security, or something else, please feel free to reach out to talk to us. We can tell you in a short period of time whether actuated would be a good fit for your team, and what results other teams like yours may have seen already. Plans run month to month, so it's relatively low risk to try actuated out on a couple of repositories to see how you like it.

Are you a GitLab CI user?

We have just published new updates for actuated for self-hosted GitLab and are looking for pilot customers. Many of the same security and management issues exist whether you're using GitHub Actions or GitLab CI, so we're excited to bring the same level of security and performance to GitLab CI.

Additional resources:

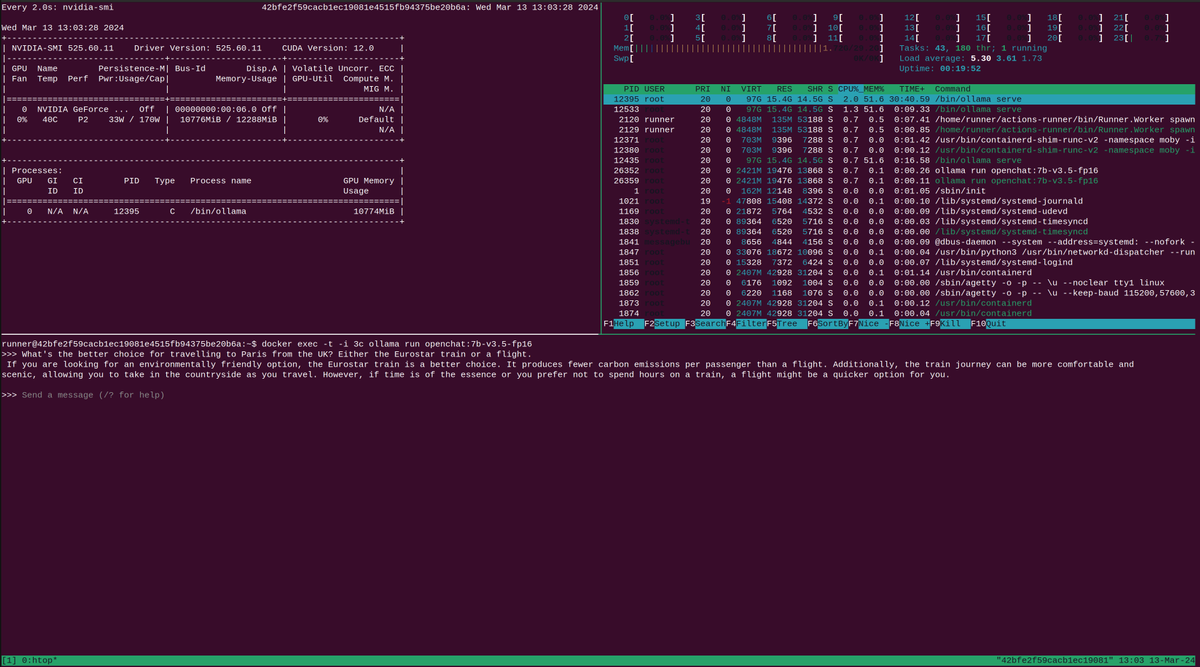

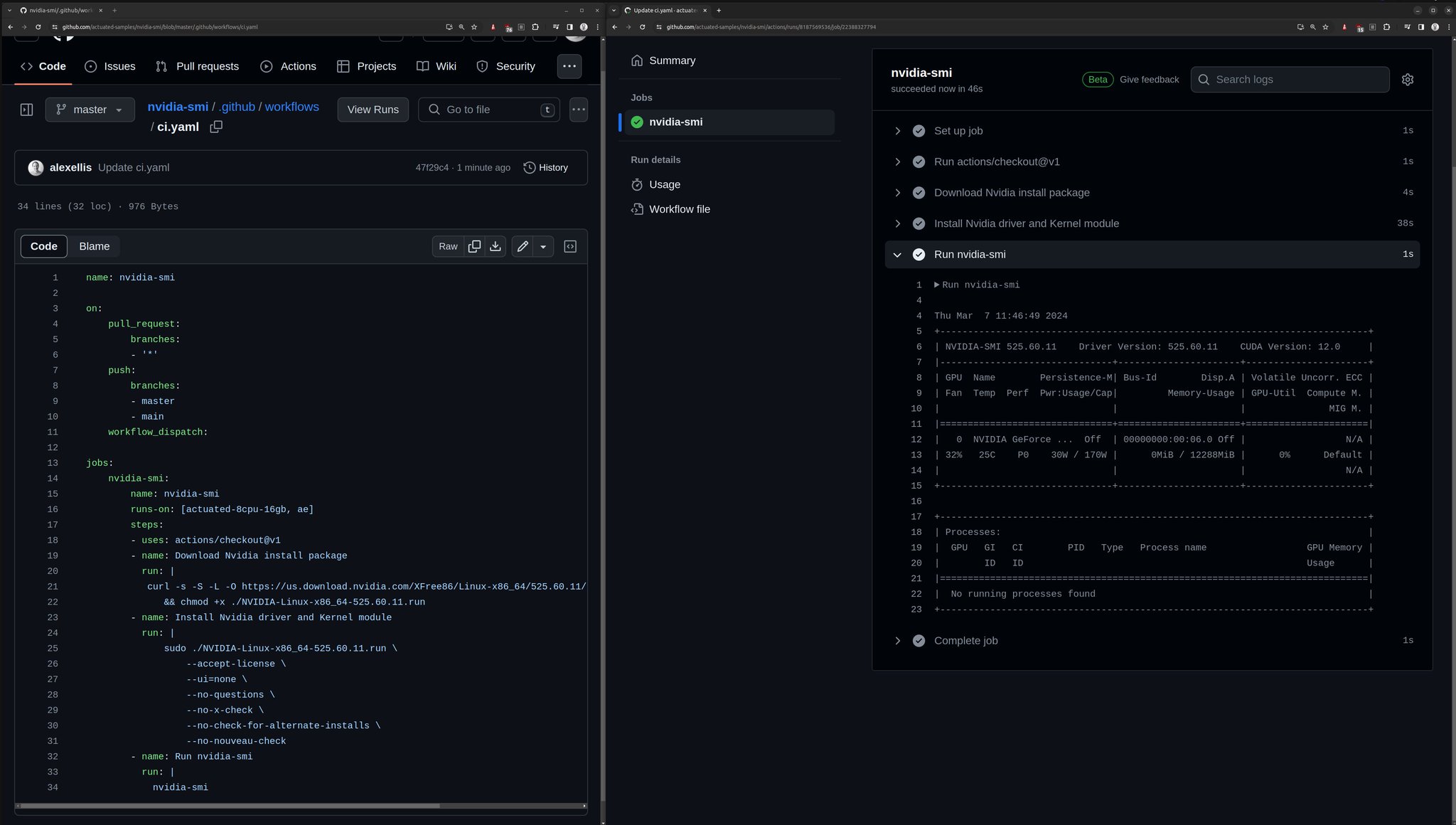

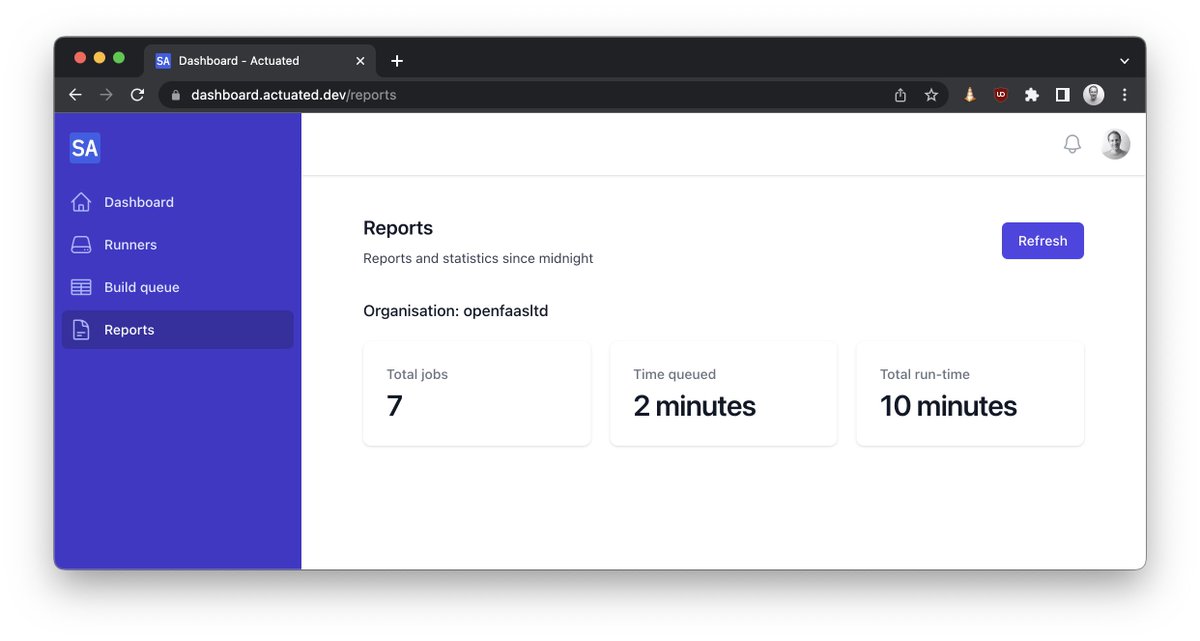

]]>Last year we introduced the tech preview for Actuated for GitLab CI, since then we've had customer interest from enterprise companies who wanted to improve their security posture and to lower overheads. Actuated reduces management overheads of self-hosted runners and provides a secure, ephemeral microVM for every job.

We've made a lot of progress since the original version and are looking for additional customers who want to deliver an improved CI experience. In this article we will give you an overview of some of the available features and how they can benefit your GitLab CI.

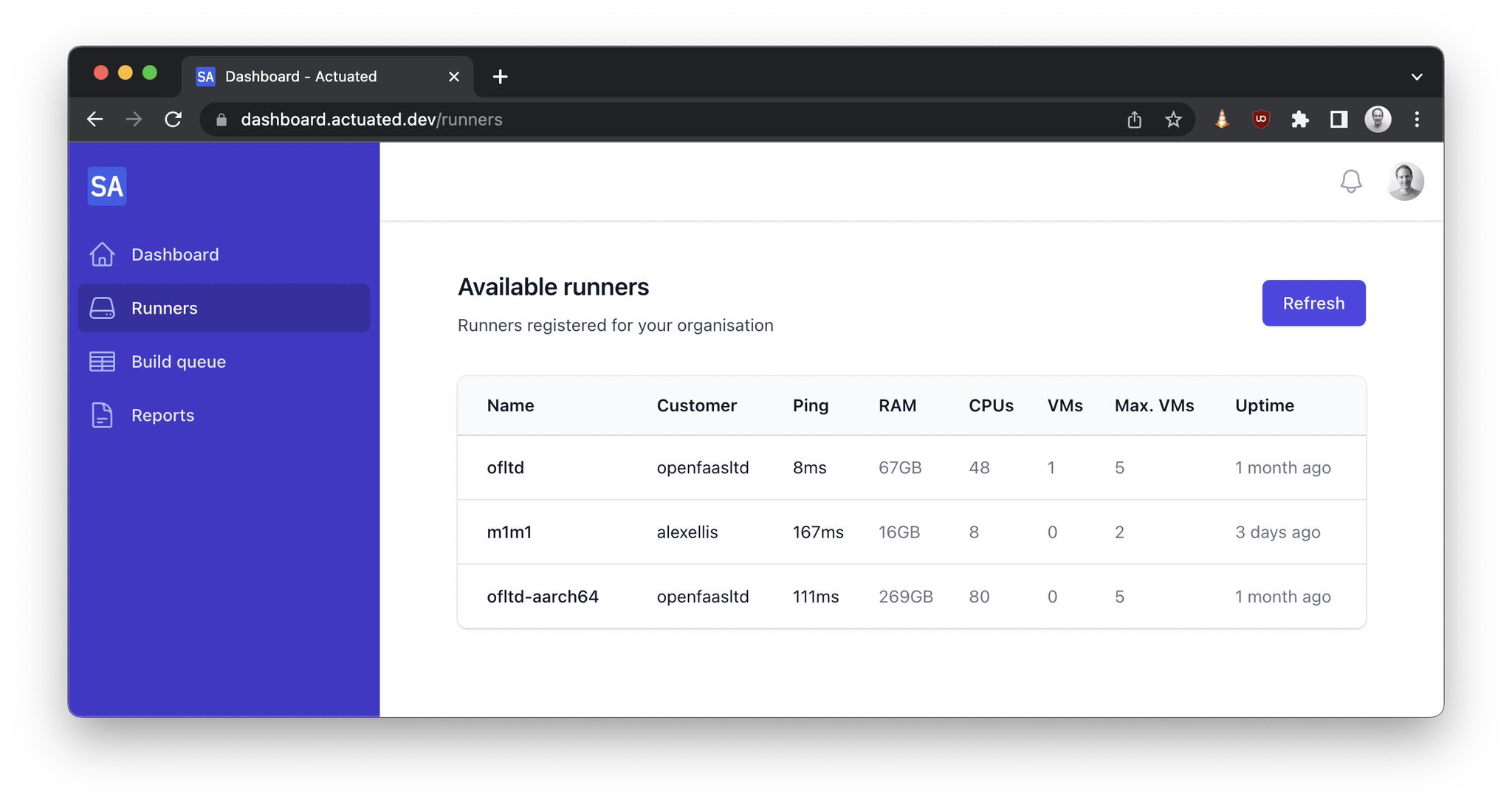

- Introduction

- Run jobs in microVMs with Actuated

- Mixed docker and shell executors

- What does an actuated server look like?

- Private peering

Why are microVMs the future of CI?

It can be challenging to run GitLab CI/CD jobs that build and publish Docker images or jobs that require extensive system access in a safe way. Docker-in-Docker (DIND) requires the docker executor to run containers in privileged mode. Using the shell executor would give a job full access to the runner host and network. They can also leave behind side effects between builds as the runner is reused.

Using both approaches causes a significant security concern and the GitLab runner security docs warn against it.

Security notice displayed by the GitLab Helm chart to explain why docker in docker is disabled by default for security purposes.

With Actuated, jobs run in ephemeral microVMs using Linux KVM for secure isolation. After the job is completed, the VM will be destroyed and removed from the GitLab instance. This allows us to safely run DIND and the shell executor in a fresh isolated environment for each job.

There are no horrible Kernel tricks or workarounds required to be able to use user namespaces, no need to change your tooling from what developers love - Docker, to Kaniko or Buildah or similar. You have sudo access and full VM with systemd available, things like Kubernetes will also work out of the box if you need them for end to end testing.

Runners get automatically added to a project and are removed again when they finish running a job.

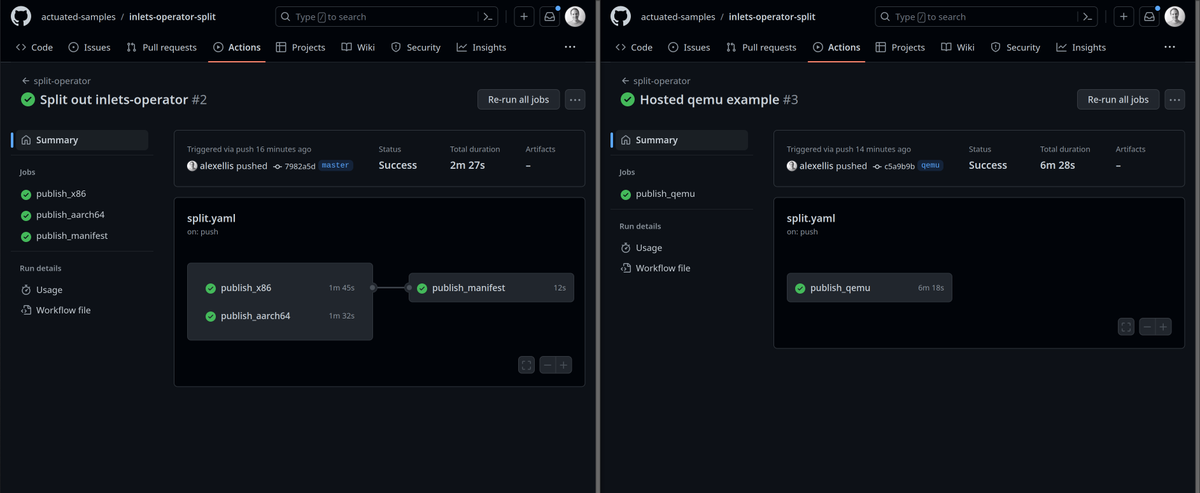

Run jobs in microVMs with Actuated

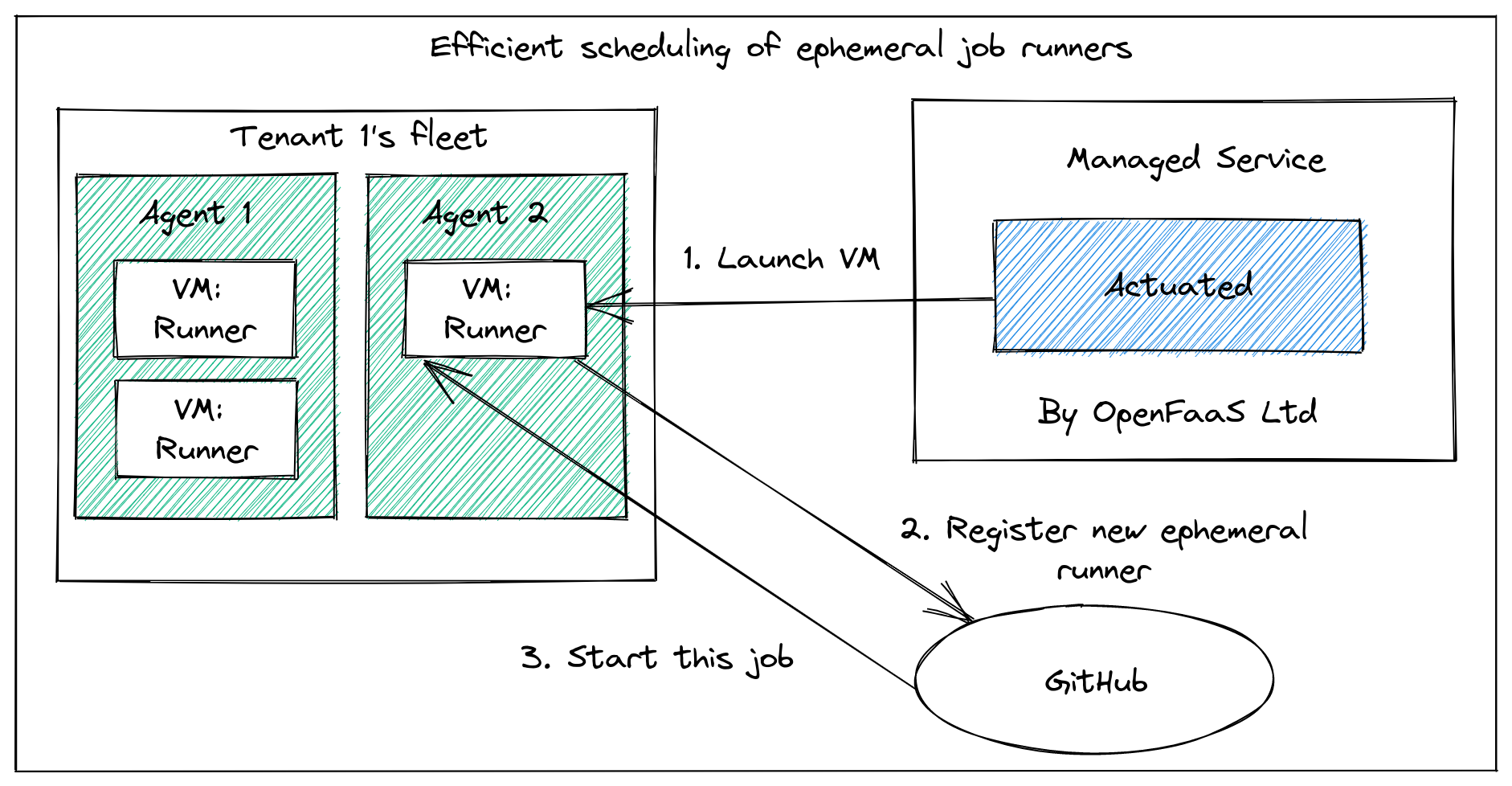

When a pipeline is triggered through a commit, merge request or in the UI the Actuated control plane gets notified through a webhook. For every job we schedule and run a new microVM and register it as a runner to the project. After the job is completed, the VM will be destroyed and removed from the GitLab instance. Scheduling and launching VMs is very fast. On average a new VM is booting up and running the job within 1 second.

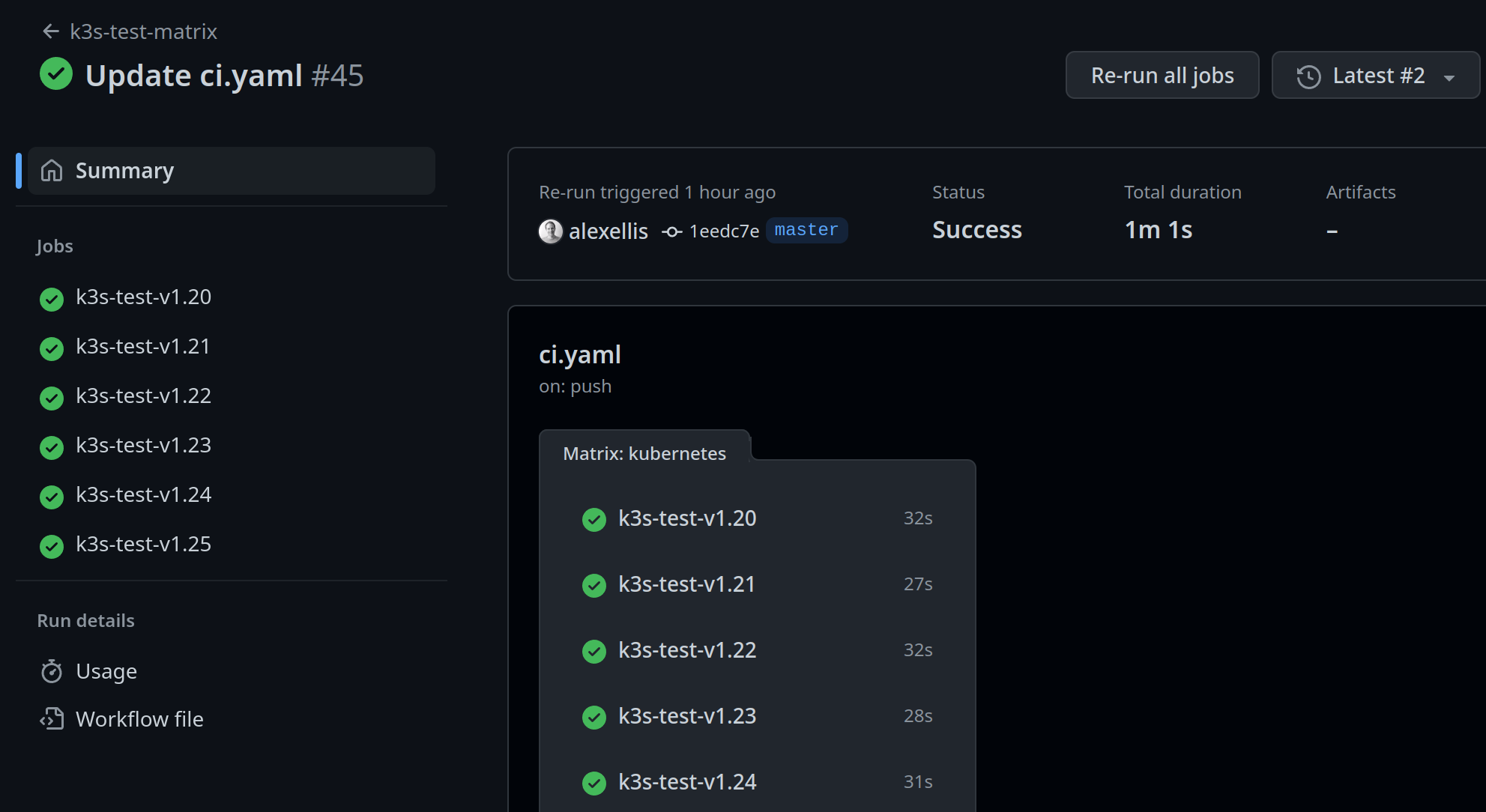

The agent will use either Firecracker or Cloud Hypervisor to launch microVMS depending on whether GPU support is required. microVMs boot almost instantly and in most cases will be faster than Kubernetes since the image is optimized and already available on each server.

To run jobs on Actuated the actuated tag has to be added to a job. One feature our customers like is the ability to configure the VM size for a job through the tag. Using the tag actuated-4cpu-8gb will schedule a VM with 4 vCPUs and 8 gigabytes of RAM.

You can pick any combination for vCPU and RAM. There's no need to pick a predefined runner size. This means that runners can be sized accordingly for the job they need to run so that the available CPU and memory resources can be used more efficiently.

Example .gitlab-ci.yaml that runs a job on Actuated runners using the docker executor:

image: ruby:2.7

services:

- postgres:9.3

before_script:

- bundle install

test:

script:

- bundle exec rake spec

tags:

- actuated-4cpu-8gb

Mixed Docker and Shell executors

GitLab supports a number of executors to run builds in different environments. With Actuated we support running jobs with the docker and shell executor.

There is no need to pre-configure the type of executors you want to use. Actuated allows you to quickly select the executor for a job by adding an additional tag. Adding the shell tag to a job will launch a VM and register the GitLab runners using the shell executor. If no tag is provided the docker executor is used by default.

build-job:

stage: build

script:

- echo "Hi $GITLAB_USER_LOGIN!"

tags:

- actuated-2cpu-4gb

- shell

With Actuated the shell executor can be used securely without leaving side effects behind that can influence job execution. A clean isolated build environment is provided for every job since the GitLab runner is started on an ephemeral VM that is removed as soon as the job has completed.

Using the shell executor in an isolated VM lets you safely run workloads like:

- Jobs that benefit from or need hardware acceleration e.g. the android emulator.

- Jobs that require extensive system access.

- Run a KinD or K3s cluster in CI for E2E testing.

These kinds of jobs can be difficult to run in a docker container or would require the container to run in privileged mode which is unsafe and advised against in GitLab runner security guidelines.

Since jobs run in ephemeral VMs with Actuated it is also possible to run the docker executor safely in privileged mode. If you are already using the docker executor in privileged mode Actuated can improve the security of your jobs without making changes to your existing pipelines.

The following .gitlab-ci.yaml runs two jobs. The first job uses the docker executor to build and push a container image for an OpenFaaS function with Docker and the faas-cli. The second job sets the additional shell tag to request Actuated to run the job with the shell executor. By running the jobs with the shell executor we get access to the full ephemeral VM that is launched for the job. This makes it easy to bootstrap a K3s Kubernetes cluster with k3sup for E2E testing the function with OpenFaaS.

stages:

- push

- e2e

variables:

DOCKER_DRIVER: overlay2

DOCKER_TLS_CERT_DIR: ""

push_job:

stage: push

image: docker:latest

before_script:

# Install dependencies: faas-cli

- apk add --no-cache git curl

- if [ -f "./faas-cli" ] ; then cp ./faas-cli /usr/local/bin/faas-cli || 0 ; fi

- if [ ! -f "/usr/local/bin/faas-cli" ] ; then apk add --no-cache curl git &&

curl -sSL https://cli.openfaas.com |

sh && chmod +x /usr/local/bin/faas-cli &&

cp /usr/local/bin/faas-cli ./faas-cli ; fi

script:

- echo $CI_JOB_TOKEN | docker login $CI_REGISTRY \

-u $CI_REGISTRY_USER \

--password-stdin

# Build and push an OpenFaaS function

- /usr/local/bin/faas-cli template pull stack

- /usr/local/bin/faas-cli publish

tags:

- actuated-4cpu-8gb

e2e_job:

stage: e2e

before_script:

# Install dependencies: faas-cli kubectl kubectx k3sup

- curl -SLs https://get.arkade.dev | sh

- export PATH=$PATH:$HOME/.arkade/bin/

- arkade get faas-cli kubectl kubectx k3sup --progress=false

script:

# Deploy a K3s cluster.

- |

mkdir -p ~/.kube/

k3sup install --local --local-path ~/.kube/config

k3sup ready

- kubectl get nodes

# Install OpenFaaS on the local cluster.

- mkdir -p ~/.openfaas && echo $OPENFAAS_LICENSE > ~/.openfaas/LICENSE

- |

arkade install openfaas \

--license-file ~/.openfaas/LICENSE \

--operator \

--clusterrole \

--jetstream \

--autoscaler